Introduction to Algorithms - What are they and how are they useful?

#3B1B #SoMe2 This is my submission for this year's SoME, SoME2!! I hope you enjoy, and please feel free to leave any comments. Any feedback is hugely appreciated~! Time Stamps: 00:00 Intro 00:37 Introduction to Algorithms 03:47 Exploring Algorithms - Binary Searc

From playlist Summer of Math Exposition 2 videos

Adaptive Sampling via Sequential Decision Making - András György

The workshop aims at bringing together researchers working on the theoretical foundations of learning, with an emphasis on methods at the intersection of statistics, probability and optimization. Lecture blurb Sampling algorithms are widely used in machine learning, and their success of

From playlist The Interplay between Statistics and Optimization in Learning

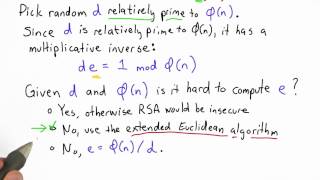

Compute E Solution - Applied Cryptography

This video is part of an online course, Applied Cryptography. Check out the course here: https://www.udacity.com/course/cs387.

From playlist Applied Cryptography

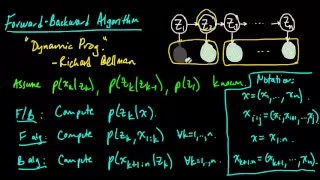

(ML 14.6) Forward-Backward algorithm for HMMs

The Forward-Backward algorithm for a hidden Markov model (HMM). How the Forward algorithm and Backward algorithm work together. Discussion of applications (inference, parameter estimation, sampling from the posterior, etc.).

From playlist Machine Learning

Raúl Tempone: Adaptive strategies for Multilevel Monte Carlo

Abstract: We will first recall, for a general audience, the use of Monte Carlo and Multi-level Monte Carlo methods in the context of Uncertainty Quantification. Then we will discuss the recently developed Adaptive Multilevel Monte Carlo (MLMC) Methods for (i) Itô Stochastic Differential Equ

From playlist Probability and Statistics

Alina Ene: Adaptive gradient descent methods for constrained optimization

Adaptive gradient descent methods, such as the celebrated Adagrad algorithm (Duchi, Hazan, and Singer; McMahan and Streeter) and ADAM algorithm (Kingma and Ba), are some of the most popular and influential iterative algorithms for optimizing modern machine learning models. Algorithms in th

From playlist Workshop: Continuous approaches to discrete optimization

Jana Cslovjecsek: Efficient algorithms for multistage stochastic integer programming using proximity

We consider the problem of solving integer programs of the form $\min \{c^T x : Ax = b;\ x \geq 0\}$, where $A$ is a multistage stochastic matrix. We give an algorithm that solves this problem in fixed-parameter time $f(d; \|A\|_\infty)\, n \log^{O(2^d)} n$, where $f$ is a computable function, $d$ is the treed

From playlist Workshop: Parametrized complexity and discrete optimization

Algorithms Explained: What is an Algorithm?

This video defines what an algorithm is, distinguishes algorithms from recipes and functions and gives some examples of algorithms. This is the first video in an "Algorithms Explained" series that discusses algorithms at a conceptual level. Videos in this series that discuss specific algo

From playlist Algorithms Explained

Rigorous Data Dredging...Data Analysis - Aaron Roth

Differential Privacy Symposium: Four Facets of Differential Privacy Saturday, November 12, 2016 https://www.ias.edu/differential-privacy More videos on http://video.ias.edu

From playlist Differential Privacy Symposium - November 12, 2016

Pandora's Box with Correlations: Learning and Approximation - Shuchi Chawla

Computer Science/Discrete Mathematics Seminar I Topic: Pandora's Box with Correlations: Learning and Approximation Speaker: Shuchi Chawla Affiliation: University of Wisconsin-Madison Date: April 05, 2021 For more videos please visit http://video.ias.edu

From playlist Mathematics

NIPS 2011 Domain Adaptation Workshop: Discrepancy and Adaptation

Domain Adaptation Workshop: Theory and Application at NIPS 2011 Invited Talk: Discrepancy and Adaptation by Mehryar Mohri Mehryar Mohri is a Professor at the Courant Institute and a Research Consultant at Google. His current research interests include machine learning, computational

From playlist NIPS 2011 Domain Adaptation Workshop

Stanford CS330: Multi-Task and Meta-Learning, 2019 | Lecture 7 - Kate Rakelly (UC Berkeley)

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai Kate Rakelly (UC Berkeley) Guest Lecture in Stanford CS330 http://cs330.stanford.edu/ 0:00 Introduction 0:17 Lecture outline 1:07 Recap: meta-reinforcement lear

From playlist Stanford CS330: Deep Multi-Task and Meta Learning

Josh Bongard - A xither of xenobots: demolishing dichotomous thinking with synthetic proto-organisms

Recorded 17 February 2022. Josh Bongard of the University of Vermont presents "A xither of xenobots: demolishing dichotomous thinking with synthetic proto-organisms" at IPAM's Mathematics of Collective Intelligence Workshop. Learn more online at: http://www.ipam.ucla.edu/programs/workshops

From playlist Workshop: Mathematics of Collective Intelligence - Feb. 15 - 19, 2022.

The Visual Task Adaptation Benchmark

This paper presents a new benchmark for Visual Task Adaptation (i.e. BERT for images) and investigates several baseline methods for doing so. Abstract: Representation learning promises to unlock deep learning for the long tail of vision tasks without expensive labelled datasets. Yet, the

From playlist General Machine Learning

Discrete Optimization Under Uncertainty - Sahil Singla

Short talks by postdoctoral members Topic: Discrete Optimization Under Uncertainty. Speaker: Sahil Singla Affiliation: Member, School of Mathematics Date: October 2, 2019 For more videos please visit http://video.ias.edu

From playlist Mathematics

Nexus Trimester - Sidharth Jaggi (The Chinese University of Hong Kong)

Group-testing: Together we are one Sidharth Jaggi (The Chinese University of Hong Kong) March 16, 2016 Group testing is perhaps the “simplest” class of non-linear inference problems. Broadly speaking, group-testing measurements exhibit a “threshold” behaviour, with positive test outcomes

From playlist 2016-T1 - Nexus of Information and Computation Theory - CEB Trimester

From playlist Plenary talks One World Symposium 2020

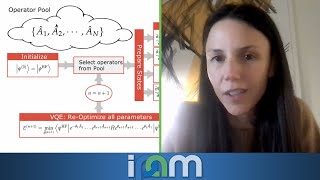

Sophia Economou - Problem-tailored variational quantum algorithms - IPAM at UCLA

Recorded 26 January 2022. Sophia Economou of Virginia Tech presents "Problem-tailored variational quantum algorithms" at IPAM's Quantum Numerical Linear Algebra Workshop. Abstract: The performance of variational quantum algorithms (VQAs) critically depends on the objective function, which

From playlist Quantum Numerical Linear Algebra - Jan. 24 - 27, 2022

Make A Combination Lock - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms