TV commercial for De Belastingdienst (Tel Sell parody)

From playlist awareness

5 Best Practices In DevOps Culture | What is DevOps? | Edureka

🔥Edureka DevOps Post Graduate Program with Purdue University: https://www.edureka.co/executive-programs/purdue-devops This tutorial explains what DevOps is. It will help you understand some of the best practices in DevOps culture. This video will also provide an insight into how different

From playlist Webinars by Edureka!

Doodh Doodh Wonderful Doodh - Amul Ad

Doodh Doodh Wonderful Doodh piyo glass full - Amul Ad

From playlist Advertisements

From playlist Getting Started in Cryo-EM

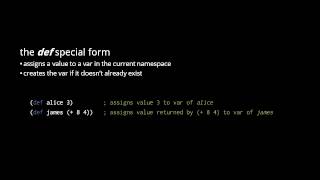

Clojure - the Reader and Evaluator (4/4)

Part of a series teaching the Clojure language. For other programming topics, visit http://codeschool.org

From playlist the Clojure language

AdaBoost is one of those machine learning methods that seems so much more confusing than it really is. It's really just a simple twist on decision trees and random forests. NOTE: This video assumes you already know about Decision Trees... https://youtu.be/_L39rN6gz7Y ...and Random Forests

From playlist StatQuest

Machine Learning Lecture 33 "Boosting Continued" -Cornell CS4780 SP17

Lecture Notes: http://www.cs.cornell.edu/courses/cs4780/2018fa/lectures/lecturenote19.html

From playlist CORNELL CS4780 "Machine Learning for Intelligent Systems"

REFERENCES [1] A Short Introduction to Boosting: https://cseweb.ucsd.edu/~yfreund/papers/IntroToBoosting.pdf [2] A Theory of the Learnable (Valiant, 1984): http://web.mit.edu/6.435/www/Valiant84.pdf. This introduced the PAC Learning model [3] PAC Learning Model: https://www.youtube.com/wa

From playlist Algorithms and Concepts

Gradient Boost Part 1 (of 4): Regression Main Ideas

Gradient Boost is one of the most popular Machine Learning algorithms in use. And get this, it's not that complicated! This video is the first part in a series that walks through it one step at a time. This video focuses on the main ideas behind using Gradient Boost to predict a continuous

From playlist StatQuest

AdaBoost in Python - Machine Learning From Scratch 13 - Python Tutorial

Get my Free NumPy Handbook: https://www.python-engineer.com/numpybook In this Machine Learning from Scratch Tutorial, we are going to implement the AdaBoost algorithm using only built-in Python modules and numpy. AdaBoost is an ensemble technique that attempts to create a strong classifie

From playlist Machine Learning from Scratch - Python Tutorials

Ensemble Learning | Ensemble Learning In Machine Learning | Machine Learning Tutorial | Simplilearn

🔥Artificial Intelligence Engineer Program (Discount Coupon: YTBE15): https://www.simplilearn.com/masters-in-artificial-intelligence?utm_campaign=EnsembleLearning&utm_medium=Descriptionff&utm_source=youtube 🔥Professional Certificate Program In AI And Machine Learning: https://www.simplilear

AdaBoost : Data Science Concepts

How do we put together lots of weak models into a STRONG model?

From playlist Data Science Concepts

Clojure - the Reader and Evaluator (2/4)

Part of a series teaching the Clojure language. For other programming topics, visit http://codeschool.org

From playlist the Clojure language

AdaBoost (Adaptive Boosting) ensemble learning technique for classification

From playlist cs273a

Machine Learning Lecture 34 "Boosting / Adaboost" -Cornell CS4780 SP17

Lecture Notes: http://www.cs.cornell.edu/courses/cs4780/2018fa/lectures/lecturenote19.html

From playlist CORNELL CS4780 "Machine Learning for Intelligent Systems"

How to Work with Wikipedia Sandbox

This is a short video that helps Wikipedia students and editors access and edit the Sandbox in their user account. This was made for the Wiki Edu Project. I do not own or hold copyright over any aspect of the Wikipedia site or its pages. ***There is no audio***

From playlist Wikipedia Education Dashboard Tutorials
