(ML 3.1) Decision theory (Basic Framework)

A simple example to motivate decision theory, along with definitions of the 0-1 loss and the square loss. A playlist of these Machine Learning videos is available here: http://www.youtube.com/my_playlists?p=D0F06AA0D2E8FFBA

From playlist Machine Learning

In this video, you’ll learn strategies for making decisions large and small. Visit https://edu.gcfglobal.org/en/problem-solving-and-decision-making/ for our text-based tutorial. We hope you enjoy!

From playlist Making Decisions

Why We May Be Angry Rather Than Sad

Behind many of our moods of depression lies something surprising: anger. Anger that hasn’t had the chance to know and express itself frequently curdles into depression – something we should bear in mind when trying to dig ourselves out of our saddest states of mind. If you like our films,

From playlist SELF

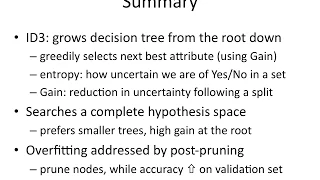

From playlist Decision Tree Learning

In this video I talk about having regrets in mathematics and in life in general. I also talk about how to deal with them. What do you all think? Do you have regrets in mathematics? How do you deal with them? Please leave any comments or questions in the comment section below. If you enjo

From playlist Inspiration and Advice

Powered by https://www.numerise.com/ Formulating a linear programming problem

From playlist Linear Programming - Decision Maths 1

(ML 11.4) Choosing a decision rule - Bayesian and frequentist

Choosing a decision rule, from Bayesian and frequentist perspectives. To make the problem well-defined from the frequentist perspective, some additional guiding principle is introduced such as unbiasedness, minimax, or invariance.

From playlist Machine Learning

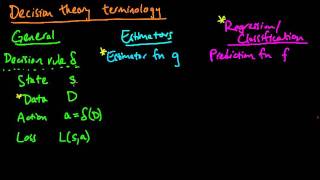

(ML 11.2) Decision theory terminology in different contexts

Comparison of decision theory terminology and notation in three different contexts: in general, for estimators, and for regression/classification.

From playlist Machine Learning

5f Machine Learning: Non-cooperative Game Theory

A lecture on non-cooperative game theory including a basic introduction up to pure and mixed strategy Nash equilibrium and applications. I was motivated by the recent use of Shapley value from cooperative game theory for machine learning model explainability.

From playlist Machine Learning

Design thinking can improve anything from a water bottle to a community water system. See how design thinking improves the creative process, from Professor Stefanos Zenios: http://stanford.io/1mgkHGR

From playlist More

Some Theoretical Results on Model-Based Reinforcement Learning by Mengdi Wang

Program Advances in Applied Probability II (ONLINE) ORGANIZERS: Vivek S Borkar (IIT Bombay, India), Sandeep Juneja (TIFR Mumbai, India), Kavita Ramanan (Brown University, Rhode Island), Devavrat Shah (MIT, US) and Piyush Srivastava (TIFR Mumbai, India) DATE & TIME 04 January 2021 to

From playlist Advances in Applied Probability II (Online)

Stanford CS234: Reinforcement Learning | Winter 2019 | Lecture 13 - Fast Reinforcement Learning III

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai Professor Emma Brunskill, Stanford University http://onlinehub.stanford.edu/ Professor Emma Brunskill Assistant Professor, Computer Science Stanford AI for Hu

From playlist Stanford CS234: Reinforcement Learning | Winter 2019

Robust Design Discovery and Exploration in Bayesian Optimization

A Google TechTalk, presented by Ilija Bogunovic, 2022/10/04 BayesOpt Speaker Series - ABSTRACT: Whether in biological design, causal discovery, material production, or physical sciences, one often faces decisions regarding which new data to collect or which experiments to perform. There is

From playlist Google BayesOpt Speaker Series 2021-2022

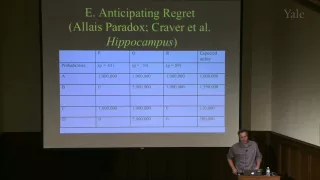

Episodic Memory, Time, and Agency: Some Constraints from Neuropsychology

People with episodic amnesia are frequently said to be stuck in time, trapped in a permanent present tense, and altogether lacking a subjective sense of temporality. These claims are grounded in the well-characterized inability of persons with episodic amnesia to perform much above floor o

From playlist Franke Program in Science and the Humanities

Author Interview - ACCEL: Evolving Curricula with Regret-Based Environment Design

#ai #accel #evolution This is an interview with the authors Jack Parker-Holder and Minqi Jiang. Original Paper Review Video: https://www.youtube.com/watch?v=povBDxUn1VQ Automatic curriculum generation is one of the most promising avenues for Reinforcement Learning today. Multiple approac

From playlist Reinforcement Learning

Emotion (Part 2) || Cognitive Neuroscience (PSY 315W)

This is a recorded version of a livestream distance learning lecture, recorded during the coronavirus pandemic of 2020. Topics include: amygdala and fear, amygdala connection, and orbitofrontal cortex. I claim no ownership over any music, videos, or advertisements shown herein. All were

From playlist Cognitive Neuroscience Lectures

Algorithmic Game Theory: Two Vignettes

(March 11, 2009) Tim Roughgarden talks about algorithmic game theory and illustrates two of the main themes in the field via specific examples: performance guarantees for systems with autonomous users, illustrated by selfish routing in communication networks; and algorithmic mechanism desi

From playlist Engineering

Multi-armed Bandits Revisited by P R Kumar

PROGRAM: ADVANCES IN APPLIED PROBABILITY ORGANIZERS: Vivek Borkar, Sandeep Juneja, Kavita Ramanan, Devavrat Shah, and Piyush Srivastava DATE & TIME: 05 August 2019 to 17 August 2019 VENUE: Ramanujan Lecture Hall, ICTS Bangalore Applied probability has seen a revolutionary growth in resear

From playlist Advances in Applied Probability 2019

This Is The Best Way To Recover From Failure: If At First You Don't Succeed, Just Embrace It | TIME

Embracing the sting of failure may not sound enjoyable — but new research shows it's the best way to learn from mistakes. A study in the Journal of Behavioral Decision Making found that people who ruminated on their emotions about failure were likely to try harder to correct their mistakes

From playlist Your Career