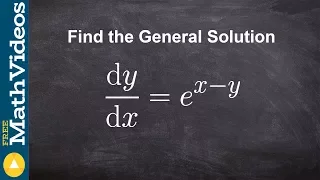

How to solve a differential equation by separating the variables

Learn how to find the particular solution of a differential equation. A differential equation is an equation that relates a function to its derivatives. The solution to a differential equation involves two parts: the general solution and the particular solution. The general solution give

From playlist Solve Differential Equation (Particular Solution) #Integration

Calculus: We present a procedure for solving word problems on optimization using derivatives. Examples include the fence problem and the minimum distance from a point to a line problem.

From playlist Calculus Pt 1: Limits and Derivatives

How to solve differential equations with logarithms

Learn how to find the particular solution of a differential equation. A differential equation is an equation that relates a function to its derivatives. The solution to a differential equation involves two parts: the general solution and the particular solution. The general solution give

From playlist Differential Equations

Find the particular solution given the conditions and second derivative

Learn how to find the particular solution of a differential equation. A differential equation is an equation that relates a function to its derivatives. The solution to a differential equation involves two parts: the general solution and the particular solution. The general solution give

From playlist Solve Differential Equation (Particular Solution) #Integration

Particular solution of differential equations

Learn how to find the particular solution of a differential equation. A differential equation is an equation that relates a function to its derivatives. The solution to a differential equation involves two parts: the general solution and the particular solution. The general solution give

From playlist Solve Differential Equation (Particular Solution) #Integration

Constraint Satisfaction Problems in Python

Author David Kopec discusses Constraint-Satisfaction Problems in Python. To learn more, see David's book Classic Computer Science Problems in Python | http://mng.bz/95B1 This video is also available on Manning's liveVideo platform: http://mng.bz/j2wP Use the discount code WATCHKOPEC40 f

From playlist Python

Constraint-Satisfaction Problems in Python

Author David Kopec discusses Constraint-Satisfaction Problems in Python. To learn more, see David's book Classic Computer Science Problems in Python | http://mng.bz/opAp Use the discount code TWITKOPE40 for 40% off of any Manning title. A large number of problems which computational too

From playlist Python

Solve an equation with a rational term

👉 Learn how to solve rational equations. A rational expression is a fraction whose numerator, denominator, or both are algebraic expressions. There are many ways to solve rational equations; one is by multiplying all the individual rationa

From playlist How to Solve Rational Equations with an Integer

Stochastic Local Search and the Lovasz Local Lemma - Fotios Iliopoulos

Short talks by postdoctoral members. Topic: Stochastic Local Search and the Lovasz Local Lemma. Speaker: Fotios Iliopoulos. Affiliation: Member, School of Mathematics. Date: September 25. For more videos, please visit http://video.ias.edu

From playlist Mathematics

What are the restrictions we put on a rational expression

👉 Learn about solving rational equations. A rational expression is a fraction whose numerator, denominator, or both are algebraic expressions. There are many ways to solve rational equations; one is by multiplying all the individual ration

From playlist How to Solve Rational Equations | Learn About

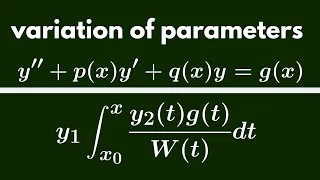

Differential Equations | Variation of Parameters.

We derive the general form for a solution to a differential equation using variation of parameters. http://www.michael-penn.net

From playlist Differential Equations

General solution of a separable equation

Learn how to find the particular solution of a differential equation. A differential equation is an equation that relates a function to its derivatives. The solution to a differential equation involves two parts: the general solution and the particular solution. The general solution give

From playlist Differential Equations

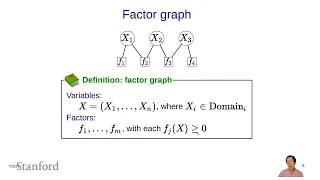

Constraint Satisfaction Problems (CSPs) 1 - Overview | Stanford CS221: AI (Autumn 2021)

For more information about Stanford's Artificial Intelligence professional and graduate programs visit: https://stanford.io/ai Associate Professor Percy Liang Associate Professor of Computer Science and Statistics (courtesy) https://profiles.stanford.edu/percy-liang Assistant Professor

From playlist Stanford CS221: Artificial Intelligence: Principles and Techniques | Autumn 2021

Bartolomeo Stellato - Learning for Decision-Making Under Uncertainty - IPAM at UCLA

Recorded 01 March 2023. Bartolomeo Stellato of Princeton University, Operations Research and Financial Engineering, presents "Learning for Decision-Making Under Uncertainty" at IPAM's Artificial Intelligence and Discrete Optimization Workshop. Abstract: We present two data-driven methods t

From playlist 2023 Artificial Intelligence and Discrete Optimization

Melanie Zeilinger: "Learning-based Model Predictive Control - Towards Safe Learning in Control"

Intersections between Control, Learning and Optimization 2020 "Learning-based Model Predictive Control - Towards Safe Learning in Control" Melanie Zeilinger - ETH Zurich & University of Freiburg Abstract: The question of safety when integrating learning techniques in control systems has

From playlist Intersections between Control, Learning and Optimization 2020

Constraint Satisfaction Problems (CSPs) 2 - Definitions | Stanford CS221: AI (Autumn 2021)

For more information about Stanford's Artificial Intelligence professional and graduate programs visit: https://stanford.io/ai Associate Professor Percy Liang Associate Professor of Computer Science and Statistics (courtesy) https://profiles.stanford.edu/percy-liang Assistant Professor

From playlist Stanford CS221: Artificial Intelligence: Principles and Techniques | Autumn 2021

Optimization - Lecture 3 - CS50's Introduction to Artificial Intelligence with Python 2020

00:00:00 - Introduction 00:00:15 - Optimization 00:01:20 - Local Search 00:07:24 - Hill Climbing 00:29:43 - Simulated Annealing 00:40:43 - Linear Programming 00:51:03 - Constraint Satisfaction 00:59:17 - Node Consistency 01:03:03 - Arc Consistency 01:16:53 - Backtracking Search This cours

From playlist CS50's Introduction to Artificial Intelligence with Python 2020

Alexandra Kolla - Quantum Approximate Optimization Algorithm (QAOA) and Local Max-Cut - IPAM at UCLA

Recorded 27 January 2022. Alexandra Kolla of the University of California, Santa Cruz, presents "Quantum Approximate Optimization Algorithm (QAOA) and Local Max-Cut" at IPAM's Quantum Numerical Linear Algebra Workshop. Abstract: We will discuss methods to determine how good of an approxima

From playlist Quantum Numerical Linear Algebra - Jan. 24 - 27, 2022

Learning to solve and graph an absolute value inequality with a rational quantity

👉 Learn how to solve multi-step absolute value inequalities. The absolute value of a number is its distance from zero, so it is never negative. For instance, the absolute value of 2 is 2 and the absolute value of -2 is also 2. To solve an absolute value inequality where there are more terms apart from th

From playlist Solve Absolute Value Inequalities | Hard