Bayesian hierarchical modeling

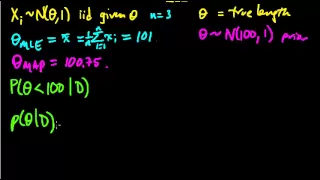

Bayesian hierarchical modeling is a statistical approach in which the model is written in multiple levels (hierarchical form) and the posterior distribution of its parameters is estimated using the Bayesian method. The sub-models combine to form the hierarchical model, and Bayes' theorem is used to integrate them with the observed data and account for all the uncertainty that is present. The result of this integration is the posterior distribution, an updated probability estimate that reflects the additional evidence combined with the prior distribution.

Frequentist statistics may yield conclusions seemingly incompatible with those offered by Bayesian statistics, because the Bayesian approach treats the parameters as random variables and uses subjective information in establishing assumptions about them. As the two approaches answer different questions, the formal results are not technically contradictory, but the approaches disagree over which answer is relevant to particular applications. Bayesians argue that relevant information regarding decision-making and updating beliefs cannot be ignored, and that hierarchical modeling has the potential to overrule classical methods in applications where respondents give multiple observations. Moreover, the model has proven to be robust, with the posterior distribution less sensitive to the more flexible hierarchical priors.

Hierarchical modeling is used when information is available on several different levels of observational units. For example, in epidemiological modeling that describes infection trajectories for multiple countries, the observational units are countries, and each country has its own temporal profile of daily infected cases. In decline curve analysis that describes oil or gas production decline curves for multiple wells, the observational units are oil or gas wells in a reservoir region, and each well has its own temporal profile of oil or gas production rates (usually in barrels per month).
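
As a sketch of how the levels fit together, a generic two-level model for units such as the countries or wells above can be written as follows. The symbols are illustrative assumptions rather than notation from the text: $y_j$ denotes the data for unit $j$, $\theta_j$ its unit-level parameters, and $\phi$ the hyperparameters shared by all $J$ units, with units assumed conditionally independent given $\phi$.

$$
y_j \mid \theta_j \sim p(y_j \mid \theta_j), \qquad
\theta_j \mid \phi \sim p(\theta_j \mid \phi), \qquad
\phi \sim p(\phi), \qquad j = 1, \dots, J
$$

Under these assumptions, Bayes' theorem combines the sub-models with the observed data into the joint posterior distribution:

$$
p(\theta_1, \dots, \theta_J, \phi \mid y) \;\propto\; p(\phi) \prod_{j=1}^{J} p(\theta_j \mid \phi)\, p(y_j \mid \theta_j)
$$
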
The data used in hierarchical modeling retain a nested structure. The hierarchical form of analysis and organization helps in the understanding of multiparameter problems and also plays an important role in developing computational strategies. (Wikipedia).
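
To make the nested structure concrete, the following is a minimal sketch of one very simple hierarchical model, not a general-purpose implementation. It assumes a normal model for the observations within each unit, a normal distribution for the unit-level means, a flat prior on the shared mean, and, as a strong simplification, known standard deviations `sigma` (within units) and `tau` (between units). The function name `partial_pooling`, the well names, and the numbers are all hypothetical and chosen only for illustration.

```python
from statistics import fmean


def partial_pooling(groups, sigma, tau):
    """Posterior means for a two-level normal model with known variances.

    Assumed model (illustrative only):
        y_ij    ~ Normal(theta_j, sigma^2)   observations within unit j
        theta_j ~ Normal(mu, tau^2)          unit-level means
        p(mu)   proportional to a constant   flat prior on the shared mean
    Returns the posterior mean of mu and of each theta_j.
    """
    # Sample mean of each unit and the sampling variance of that mean.
    ybar = {name: fmean(ys) for name, ys in groups.items()}
    se2 = {name: sigma**2 / len(ys) for name, ys in groups.items()}

    # Marginally, ybar_j ~ Normal(mu, se2_j + tau^2), so under a flat prior
    # the posterior mean of mu is a precision-weighted average of the ybar_j.
    weights = {name: 1.0 / (se2[name] + tau**2) for name in groups}
    mu_hat = sum(weights[n] * ybar[n] for n in groups) / sum(weights.values())

    # Each unit mean is a precision-weighted compromise between its own
    # sample mean and the shared mean (shrinkage toward mu_hat).
    theta_hat = {}
    for name in groups:
        prec_data = 1.0 / se2[name]
        prec_prior = 1.0 / tau**2
        theta_hat[name] = (
            prec_data * ybar[name] + prec_prior * mu_hat
        ) / (prec_data + prec_prior)
    return mu_hat, theta_hat


# Hypothetical nested data: production rates grouped by well.
wells = {
    "well_A": [10.0, 12.0, 11.0, 13.0],
    "well_B": [20.0],
    "well_C": [15.0, 17.0],
}
mu_hat, theta_hat = partial_pooling(wells, sigma=2.0, tau=3.0)
for name, estimate in theta_hat.items():
    print(f"{name}: {estimate:.2f}")
print(f"shared mean: {mu_hat:.2f}")
```

In this sketch, units with fewer observations (here the single-observation `well_B`) are pulled more strongly toward the shared mean than units with more data. In practice the variances are rarely known, and the full posterior is usually explored with sampling methods rather than closed-form expressions.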