Gradient Boosting : Data Science's Silver Bullet

A dive into the all-powerful gradient boosting method!

From playlist Data Science Concepts

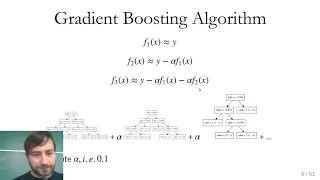

Ensembles (3): Gradient Boosting

Gradient boosting ensemble technique for regression

From playlist cs273a

Applied ML 2020 - 08 - Gradient Boosting

Materials at https://www.cs.columbia.edu/~amueller/comsw4995s20/schedule/

From playlist Applied Machine Learning 2020

Gradient Boost Part 1 (of 4): Regression Main Ideas

Gradient Boost is one of the most popular Machine Learning algorithms in use. And get this, it's not that complicated! This video is the first part in a series that walks through it one step at a time. This video focuses on the main ideas behind using Gradient Boost to predict a continuous

From playlist StatQuest

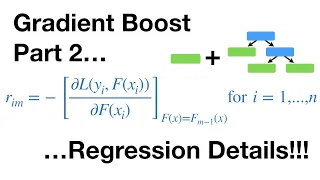

Gradient Boost Part 2 (of 4): Regression Details

Gradient Boost is one of the most popular Machine Learning algorithms in use. And get this, it's not that complicated! This video is the second part in a series that walks through it one step at a time. This video focuses on the original Gradient Boost algorithm used to predict a continuou

From playlist StatQuest

This video explains what information the gradient provides about a given function. http://mathispower4u.wordpress.com/

From playlist Functions of Several Variables - Calculus

15b Machine Learning: Gradient Boosting

Lecture on ensemble machine learning with boosting, including a demonstration of tree-based boosting.

From playlist Machine Learning

REFERENCES [1] A Short Introduction to Boosting: https://cseweb.ucsd.edu/~yfreund/papers/IntroToBoosting.pdf [2] A Theory of the Learnable (Valiant, 1984): http://web.mit.edu/6.435/www/Valiant84.pdf. This introduced the PAC Learning model [3] PAC Learning Model: https://www.youtube.com/wa

From playlist Algorithms and Concepts

Applied Machine Learning 2019 - Lecture 09 - Gradient boosting; Calibration

Gradient boosting and "extreme" gradient boosting. Calibration curves and calibrating classifiers with CalibratedClassifierCV. Class website with slides and more materials: https://www.cs.columbia.edu/~amueller/comsw4995s19/schedule/

From playlist Applied Machine Learning - Spring 2019

This video follows on from the discussion on linear regression as a shallow learner ( https://www.youtube.com/watch?v=cnnCrijAVlc ) and the video on derivatives in deep learning ( https://www.youtube.com/watch?v=wiiPVB9tkBY ). This is a deeper dive into gradient descent and the use of th

From playlist Introduction to deep learning for everyone

XGBoost Part 3 (of 4): Mathematical Details

In this video we dive into the nitty-gritty details of the math behind XGBoost trees. We derive the equations for the Output Values from the leaves as well as the Similarity Score. Then we show how these general equations are customized for Regression or Classification by their respective

From playlist StatQuest

KS5 - Sketching the Gradient Function

"Sketch the gradient function for a given curve, e.g. in relation to speed and acceleration."

From playlist Differentiation (AS/Beginner)

Gradient Boost Part 3 (of 4): Classification

This is Part 3 in our series on Gradient Boost. At long last, we are showing how it can be used for classification. This video focuses on the main ideas behind this technique. The next video in this series will focus more on the math and how it works with the underlying algorithm. T

From playlist StatQuest

Machine Learning Lecture 33 "Boosting Continued" -Cornell CS4780 SP17

Lecture Notes: http://www.cs.cornell.edu/courses/cs4780/2018fa/lectures/lecturenote19.html

From playlist CORNELL CS4780 "Machine Learning for Intelligent Systems"

Download the free PDF http://tinyurl.com/EngMathYT A basic tutorial on the gradient field of a function. We show how to compute the gradient; its geometric significance; and how it is used when computing the directional derivative. The gradient is a basic property of vector calculus. NOT

From playlist Engineering Mathematics

Ensemble Learning | Ensemble Learning In Machine Learning | Machine Learning Tutorial | Simplilearn
