A very basic overview of optimization, why it's important, the role of modeling, and the basic anatomy of an optimization project.

From playlist Optimization

Particle Swarm Optimization (PSO) - Part 1: Introduction

This video is about Particle Swarm Optimization (PSO) - Part 1: Introduction

From playlist Optimization

What Is Mathematical Optimization?

A gentle and visual introduction to the topic of Convex Optimization. (1/3) This video is the first of a series of three. The plan is as follows: Part 1: What is (Mathematical) Optimization? (https://youtu.be/AM6BY4btj-M) Part 2: Convexity and the Principle of (Lagrangian) Duality (

From playlist Convex Optimization

In this second part on Motion, we calculate the velocity and position vectors given the acceleration vector and initial values for velocity and position. As you might imagine, it involves some integration. Just remember that when calculating the indefinite integral o

From playlist Life Science Math: Vectors
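The relationship described in the entry above can be written out as a short worked form. This is only a sketch of the standard kinematics identities, not taken from the video itself; the symbols $\mathbf{a}$, $\mathbf{v}$, $\mathbf{r}$ and the initial values $\mathbf{v}_0$, $\mathbf{r}_0$ are notation assumed here for illustration.

$$
\mathbf{v}(t) = \mathbf{v}_0 + \int_0^t \mathbf{a}(s)\,ds,
\qquad
\mathbf{r}(t) = \mathbf{r}_0 + \int_0^t \mathbf{v}(s)\,ds
$$

For example, with a constant acceleration $\mathbf{a}$, these give $\mathbf{v}(t) = \mathbf{v}_0 + \mathbf{a}\,t$ and $\mathbf{r}(t) = \mathbf{r}_0 + \mathbf{v}_0\,t + \tfrac{1}{2}\mathbf{a}\,t^2$.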

What in the world is a linear program?

What is a linear program and why do we care? Today I’m going to introduce you to the exciting world of optimization, which is the mathematical field of maximizing or minimizing an objective function subject to constraints. The most fundamental topic in optimization is linear programming,

From playlist Summer of Math Exposition 2 videos

Finding Optimal Path Using Optimization Toolbox

Solve the path planning problem of navigating through a vector field of wind in the least possible time. For more videos, visit http://www.mathworks.com/products/optimizati

From playlist Math, Statistics, and Optimization

13_2 Optimization with Constraints

Here we optimize a function subject to constraints, seeking its minima or maxima. The examples used show the practical value of the technique.

From playlist Advanced Calculus / Multivariable Calculus

Numerical Optimization Algorithms: Gradient Descent

In this video we discuss a general framework for numerical optimization algorithms. We will see that this involves choosing a direction and step size at each step of the algorithm. In this video, we investigate how to choose a direction using the gradient descent method. Future videos d

From playlist Optimization
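The direction-and-step-size framework mentioned in the entry above can be illustrated with a minimal sketch. This is not the code from the video: the function name `gradient_descent`, the fixed `step_size`, the stopping tolerance, and the quadratic test function are all assumptions chosen for illustration.

```python
def gradient_descent(grad, x0, step_size=0.1, max_iters=1000, tol=1e-8):
    """Minimize a differentiable function given a callable for its gradient.

    Hypothetical helper: a fixed step size is assumed here; practical
    methods often choose the step size with a line search instead.
    """
    x = list(x0)
    for _ in range(max_iters):
        g = grad(x)
        # Stop once the gradient norm is close to zero.
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break
        # Direction: the negative gradient (steepest descent).
        direction = [-gi for gi in g]
        # Step: move a fixed fraction along that direction.
        x = [xi + step_size * di for xi, di in zip(x, direction)]
    return x


def quadratic_grad(x):
    # Gradient of the illustrative function f(x, y) = (x - 3)^2 + 2 * (y + 1)^2.
    return [2.0 * (x[0] - 3.0), 4.0 * (x[1] + 1.0)]


minimizer = gradient_descent(quadratic_grad, [0.0, 0.0])
print(minimizer)
```

On this convex quadratic the iterates approach the minimizer at (3, -1). With a fixed step size, convergence depends on the step being small enough relative to the curvature of the function.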

[Calculus] Optimization 1 || Lecture 34

Visit my website: http://bit.ly/1zBPlvm Subscribe on YouTube: http://bit.ly/1vWiRxW Hello, welcome to TheTrevTutor. I'm here to help you learn your college courses in an easy, efficient manner. If you like what you see, feel free to subscribe and follow me for updates. If you have any que

From playlist Calculus 1

From playlist CS294-112 Deep Reinforcement Learning Sp17

Lecture 10 | MIT 6.832 Underactuated Robotics, Spring 2009

Lecture 10: Trajectory stabilization and iterative linear quadratic regulator (iLQR) Instructor: Russell Tedrake See the complete course at: http://ocw.mit.edu/6-832s09 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.e

From playlist MIT 6.832 Underactuated Robotics, Spring 2009

Finite-Horizon, Energy-Optimal Trajectories in Unsteady Flows

This video by Kartik Krishna investigates the use of finite-horizon model predictive control (MPC) for the energy-efficient trajectory planning of an active mobile sensor in an unsteady fluid flow. Connections between the finite-time optimal trajectories and finite-time Lyapunov exponents

From playlist Research Abstracts from Brunton Lab

Regularizing Trajectory Optimization with Denoising Autoencoders (Paper Explained)

Can you plan with a learned model of the world? Yes, but there's a catch: The better your planning algorithm is, the more the errors of your world model will hurt you! This paper solves this problem by regularizing the planning algorithm to stay in high probability regions, given its exper

From playlist Papers Explained

Lecture 15 | MIT 6.832 Underactuated Robotics, Spring 2009

Lecture 15: Global policies from local policies Instructor: Russell Tedrake See the complete course at: http://ocw.mit.edu/6-832s09 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 6.832 Underactuated Robotics, Spring 2009

Lecture 9 | MIT 6.832 Underactuated Robotics, Spring 2009

Lecture 9: Trajectory optimization Instructor: Russell Tedrake See the complete course at: http://ocw.mit.edu/6-832s09 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 6.832 Underactuated Robotics, Spring 2009

Anca Dragan: "Learning Intended Cost Functions: Extracting all the right information from all th..."

Intersections between Control, Learning and Optimization 2020 "Learning Intended Cost Functions: Extracting all the right information from all the right places" Anca Dragan - University of California, Berkeley (UC Berkeley) Abstract: Optimal control work tends to focus on how to optimize

From playlist Intersections between Control, Learning and Optimization 2020

Stanford Seminar - Opening the Doors of (Robot) Perception - Luca Carlone

Opening the Doors of (Robot) Perception: Towards Certifiable Spatial Perception Algorithms and Systems Luca Carlone MIT February 11, 2022 Spatial perception, the robot’s ability to sense and understand the surrounding environment, is a key enabler for autonomous systems operating in co

From playlist Stanford AA289 - Robotics and Autonomous Systems Seminar

Qi Gong: "Nonlinear optimal feedback control - a model-based learning approach"

High Dimensional Hamilton-Jacobi PDEs 2020 Workshop I: High Dimensional Hamilton-Jacobi Methods in Control and Differential Games "Nonlinear optimal feedback control - a model-based learning approach" Qi Gong - University of California, Santa Cruz Abstract: Computing optimal feedback con

From playlist High Dimensional Hamilton-Jacobi PDEs 2020

Particle Swarm Optimization - Part 2: Global Best PSO

This video is about Particle Swarm Optimization - Part 2: Global Best PSO

From playlist Optimization

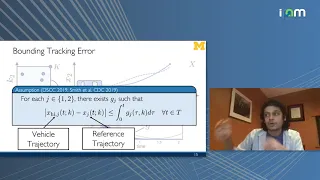

Ram Vasudevan: "Bridging the Gap Between Safety & Real-Time Performance for AV Control"

Mathematical Challenges and Opportunities for Autonomous Vehicles 2020 Workshop II: Safe Operation of Connected and Autonomous Vehicle Fleets "Bridging the Gap Between Safety and Real-Time Performance for Autonomous Vehicle Control" Ram Vasudevan - University of Michigan Abstract: Autono

From playlist Mathematical Challenges and Opportunities for Autonomous Vehicles 2020