Jana Cslovjecsek: Efficient algorithms for multistage stochastic integer programming using proximity

We consider the problem of solving integer programs of the form $\min\{c^T x : Ax = b,\ x \geq 0\}$, where $A$ is a multistage stochastic matrix. We give an algorithm that solves this problem in fixed-parameter time $f(d, \|A\|_\infty)\, n \log^{O(2^d)} n$, where $f$ is a computable function, $d$ is the treed

From playlist Workshop: Parametrized complexity and discrete optimization

"Data-Driven Optimization in Pricing and Revenue Management" by Arnoud den Boer - Lecture 1

In this course we will study data-driven decision problems: optimization problems for which the relation between decision and outcome is unknown upfront, and thus has to be learned on-the-fly from accumulating data. This type of problem has an intrinsic tension between statistical goals a

From playlist Thematic Program on Stochastic Modeling: A Focus on Pricing & Revenue Management

Stochastic Approximation-based algorithms, when the Monte (...) - Fort - Workshop 2 - CEB T1 2019

Gersende Fort (CNRS, Univ. Toulouse) / 13.03.2019 Stochastic Approximation-based algorithms, when the Monte Carlo bias does not vanish. Stochastic Approximation algorithms, of which stochastic gradient descent methods with decreasing stepsize are an example, are iterative methods to comput

From playlist 2019 - T1 - The Mathematics of Imaging

"Data-Driven Optimization in Pricing and Revenue Management" by Arnoud den Boer - Lecture 3

In this course we will study data-driven decision problems: optimization problems for which the relation between decision and outcome is unknown upfront, and thus has to be learned on-the-fly from accumulating data. This type of problem has an intrinsic tension between statistical goals a

From playlist Thematic Program on Stochastic Modeling: A Focus on Pricing & Revenue Management

Francis Bach: Large-scale machine learning and convex optimization 2/2

Abstract: Many machine learning and signal processing problems are traditionally cast as convex optimization problems. A common difficulty in solving these problems is the size of the data, where there are many observations ("large n") and each of these is large ("large p"). In this settin

From playlist Probability and Statistics

"Data-Driven Optimization in Pricing and Revenue Management" by Arnoud den Boer - Lecture 2

In this course we will study data-driven decision problems: optimization problems for which the relation between decision and outcome is unknown upfront, and thus has to be learned on-the-fly from accumulating data. This type of problem has an intrinsic tension between statistical goals a

From playlist Thematic Program on Stochastic Modeling: A Focus on Pricing & Revenue Management

Francis Bach: Large-scale machine learning and convex optimization 1/2

Abstract: Many machine learning and signal processing problems are traditionally cast as convex optimization problems. A common difficulty in solving these problems is the size of the data, where there are many observations ("large n") and each of these is large ("large p"). In this settin

From playlist Probability and Statistics

Viswanath Nagarajan: Stochastic load balancing on unrelated machines

The lecture was held within the framework of the follow-up workshop to the Hausdorff Trimester Program: Combinatorial Optimization. Abstract: We consider the unrelated machine scheduling problem when job processing-times are stochastic. We provide the first constant factor approximation

From playlist Follow-Up-Workshop "Combinatorial Optimization"

Introduction to the paper https://arxiv.org/abs/2002.06707

From playlist Research

Stochastic Gradient Descent: where optimization meets machine learning - Rachel Ward

2022 Program for Women and Mathematics: The Mathematics of Machine Learning Topic: Stochastic Gradient Descent: where optimization meets machine learning Speaker: Rachel Ward Affiliation: University of Texas, Austin Date: May 26, 2022 Stochastic Gradient Descent (SGD) is the de facto op

From playlist Mathematics

Elias Khalil - Neur2SP: Neural Two-Stage Stochastic Programming - IPAM at UCLA

Recorded 02 March 2023. Elias Khalil of the University of Toronto presents "Neur2SP: Neural Two-Stage Stochastic Programming" at IPAM's Artificial Intelligence and Discrete Optimization Workshop. Abstract: Stochastic Programming is a powerful modeling framework for decision-making under un

From playlist 2023 Artificial Intelligence and Discrete Optimization

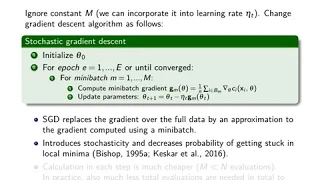

25. Stochastic Gradient Descent

MIT 18.065 Matrix Methods in Data Analysis, Signal Processing, and Machine Learning, Spring 2018 Instructor: Suvrit Sra View the complete course: https://ocw.mit.edu/18-065S18 YouTube Playlist: https://www.youtube.com/playlist?list=PLUl4u3cNGP63oMNUHXqIUcrkS2PivhN3k Professor Suvrit Sra g

From playlist MIT 18.065 Matrix Methods in Data Analysis, Signal Processing, and Machine Learning, Spring 2018

Relaxing the I.I.D. Assumption: Adaptive Minimax Optimal Sequential Prediction... - Jeffrey Negrea

Seminar on Theoretical Machine Learning Topic: Relaxing the I.I.D. Assumption: Adaptive Minimax Optimal Sequential Prediction with Expert Advice Speaker: Jeffrey Negrea Affiliation: University of Toronto Date: July 14, 2020 For more videos, please visit http://video.ias.edu

From playlist Mathematics

Jorge Nocedal: "Tutorial on Optimization Methods for Machine Learning, Pt. 3"

Graduate Summer School 2012: Deep Learning, Feature Learning "Tutorial on Optimization Methods for Machine Learning, Pt. 3" Jorge Nocedal, Northwestern University Institute for Pure and Applied Mathematics, UCLA July 18, 2012 For more information: https://www.ipam.ucla.edu/programs/summ

From playlist GSS2012: Deep Learning, Feature Learning

Pratik Chaudhari: "Unraveling the mysteries of stochastic gradient descent on deep neural networks"

New Deep Learning Techniques 2018 "Unraveling the mysteries of stochastic gradient descent on deep neural networks" Pratik Chaudhari, University of California, Los Angeles (UCLA) Abstract: Stochastic gradient descent (SGD) is widely believed to perform implicit regularization when used t

From playlist New Deep Learning Techniques 2018

Deep Learning Lecture 4.3 - Stochastic Gradient Descent

Deep Learning Lecture: Optimization Methods - Stochastic Gradient Descent (SGD) - SGD with Momentum

From playlist Deep Learning Lecture

Paolo Guasoni, Lesson II - 19 December 2017

QUANTITATIVE FINANCE SEMINARS @ SNS PROF. PAOLO GUASONI TOPICS IN PORTFOLIO CHOICE

From playlist Quantitative Finance Seminar @ SNS

Introductory lectures on first-order convex optimization (Lecture 2) by Praneeth Netrapalli

DISCUSSION MEETING : STATISTICAL PHYSICS OF MACHINE LEARNING ORGANIZERS : Chandan Dasgupta, Abhishek Dhar and Satya Majumdar DATE : 06 January 2020 to 10 January 2020 VENUE : Madhava Lecture Hall, ICTS Bangalore Machine learning techniques, especially “deep learning” using multilayer n

From playlist Statistical Physics of Machine Learning 2020

"Diffusion Approximation and Sequential Experimentation" by Victor Araman

We consider a Bayesian sequential experimentation problem. We identify environments in which the average number of experiments that is conducted per unit of time is large and the informativeness of each individual experiment is low. Under such regimes, we derive a diffusion approximation f

From playlist Thematic Program on Stochastic Modeling: A Focus on Pricing & Revenue Management

Statistical aspects of stochastic algorithms for entropic (...) - Bigot - Workshop 2 - CEB T1 2019

Jérémie Bigot (Univ. Bordeaux) / 12.03.2019 Statistical aspects of stochastic algorithms for entropic optimal transportation between probability measures. This talk is devoted to the stochastic approximation of entropically regularized Wasserstein distances between two probability measu

From playlist 2019 - T1 - The Mathematics of Imaging