The rising demand for bioprinting has fueled fresh innovation in the field. 🧐 3D printing is one of the most exciting sectors, and also one of the most practical and useful. 🙌 Printing human skin is the next step in the evolution of 3D printing. ⚡🦴 Watch the video to learn more ab

From playlist Radical Innovations

Is 3D printing a revolution or just a trend?

Additive manufacturing and 3D printing can reshape our future. Companies are now using these technologies to print everything from fully functional cars to Michelin-starred dinners. Watch this video to learn more about how 3D printing and additive manufacturing might change the future! T

From playlist Radical Innovations

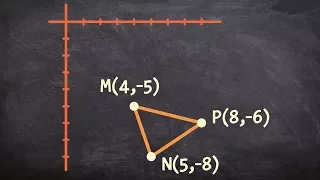

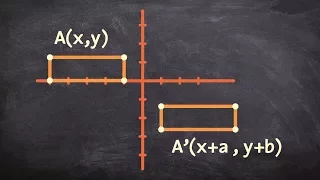

Shifting a triangle using a transformation vector

👉 Learn how to apply transformations to a figure on a plane. We will do this by sliding the figure according to the transformation vector or the directions of translation. When performing a translation, we slide a given figure up, down, left, or right. The orientation and size of the fi

From playlist Transformations
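A translation as described in this entry can be sketched in a few lines of Python. This is only an illustration: the helper name `translate`, the triangle's vertices, and the vector `(2, -1)` are assumptions chosen for the example, not values from the video.

```python
# Hypothetical helper: slide every vertex of a figure by the same vector (dx, dy).
def translate(points, vector):
    dx, dy = vector
    return [(x + dx, y + dy) for x, y in points]

# Assumed example triangle and transformation vector (2 right, 1 down).
triangle = [(0, 0), (4, 0), (0, 3)]
moved = translate(triangle, (2, -1))
print(moved)
```

Because every vertex moves by the same amount, the side lengths and orientation of the triangle are unchanged.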

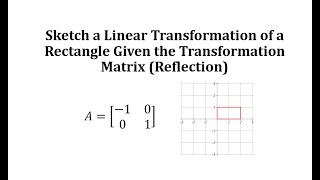

Sketch a Linear Transformation of a Rectangle Given the Transformation Matrix (Reflection)

This video explains 2 ways to graph a linear transformation of a rectangle on the coordinate plane.

From playlist Matrix (Linear) Transformations
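One way to graph such a transformation is to multiply each vertex of the rectangle by the matrix and plot the results. The sketch below assumes a reflection across the y-axis and an arbitrary rectangle. Neither is taken from the video, and `apply_matrix` is a hypothetical helper name.

```python
# Hypothetical helper: apply a 2x2 matrix ((a, b), (c, d)) to a point (x, y).
def apply_matrix(matrix, point):
    (a, b), (c, d) = matrix
    x, y = point
    return (a * x + b * y, c * x + d * y)

# Assumed example: reflection across the y-axis, which negates x and keeps y.
reflect_y_axis = ((-1, 0), (0, 1))
rectangle = [(1, 1), (4, 1), (4, 3), (1, 3)]

image = [apply_matrix(reflect_y_axis, p) for p in rectangle]
print(image)
```

Plotting `rectangle` and `image` on the same axes shows the mirrored figure.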

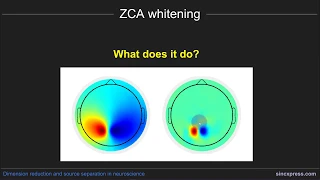

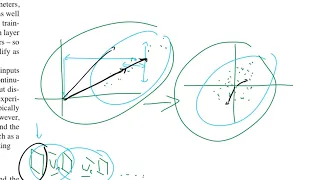

Prewhitening with zero-phase component analysis (ZCA)

This is part of an online course on covariance-based dimension-reduction and source-separation methods for multivariate data. The course is appropriate as an intermediate applied linear algebra course, or as a practical tutorial on multivariate neuroscience data analysis. More info here:

From playlist Dimension reduction and source separation
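For readers unfamiliar with the technique named in this entry's title, ZCA whitening can be sketched with NumPy as follows. This is a minimal illustration on synthetic data, not the course's own code. The data, the mixing matrix, and the variable names are all assumptions.

```python
import numpy as np

# Assumed synthetic data: 500 samples of 3 correlated channels.
rng = np.random.default_rng(0)
mixing = np.array([[2.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 0.2]])
data = rng.normal(size=(500, 3)) @ mixing

# Center the data and compute its covariance matrix.
centered = data - data.mean(axis=0)
cov = np.cov(centered, rowvar=False)

# ZCA whitening matrix: E * diag(1 / sqrt(eigenvalues)) * E^T.
eigvals, eigvecs = np.linalg.eigh(cov)
zca = eigvecs @ np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T

# The whitened data has (approximately) identity covariance.
whitened = centered @ zca
print(np.round(np.cov(whitened, rowvar=False), 3))
```

Rotating back with the eigenvectors is what distinguishes ZCA from plain PCA whitening: the whitening matrix is symmetric, so the whitened channels stay as close as possible to the original ones.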

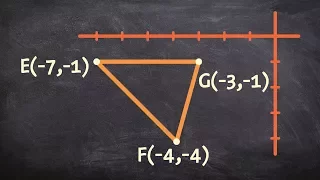

How to translate a triangle using a transformation vector

👉 Learn how to apply transformations to a figure on a plane. We will do this by sliding the figure according to the transformation vector or the directions of translation. When performing a translation, we slide a given figure up, down, left, or right. The orientation and size of the fi

From playlist Transformations

Stéphane Mallat: "Deep Generative Networks as Inverse Problems"

New Deep Learning Techniques 2018 "Deep Generative Networks as Inverse Problems" Stéphane Mallat, École Normale Supérieure Abstract: Generative Adversarial Networks and Variational Auto-Encoders provide impressive image generations from Gaussian white noise, which are not well understood

From playlist New Deep Learning Techniques 2018

If you are interested in learning more about this topic, please visit http://www.gcflearnfree.org/ to view the entire tutorial on our website. It includes instructional text, informational graphics, examples, and even interactives for you to practice and apply what you've learned.

From playlist 3D Printing

How Science Is Improving Dental Health | The Truth About Your Teeth | Spark

An estimated 84% of adults have unhealthy teeth or gums, one in 10 of us aren’t even registered with a dentist, and with the highest dental fees in Europe an estimated 200,000 Britons a year resort to DIY home dentistry. This revealing two-part documentary series explores life on the fron

From playlist Spark Top Docs

👉 Learn about dilations. A dilation transforms a shape by a scale factor, producing an image that is similar to the original shape but different in size. A dilation that creates a larger image is called an enlargement or a stretch, while a dilation tha

From playlist Transformations
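The dilation described in this entry can be sketched as scaling each vertex's offset from a center point. The helper name `dilate`, the default center at the origin, and the example triangle are assumptions made for illustration.

```python
# Hypothetical helper: scale each point's offset from the center by factor k.
def dilate(points, k, center=(0, 0)):
    cx, cy = center
    return [(cx + k * (x - cx), cy + k * (y - cy)) for x, y in points]

# Assumed example triangle.
triangle = [(1, 1), (3, 1), (1, 2)]

enlarged = dilate(triangle, 2)    # scale factor > 1: larger image
reduced = dilate(triangle, 0.5)   # scale factor between 0 and 1: smaller image
print(enlarged)
print(reduced)
```

In both cases the image keeps the same angles as the original triangle, so the two figures are similar.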

Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift

https://arxiv.org/abs/1502.03167 Abstract: Training Deep Neural Networks is complicated by the fact that the distribution of each layer's inputs changes during training, as the parameters of the previous layers change. This slows down the training by requiring lower learning rates and car

From playlist Deep Learning Architectures
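The normalization step that gives the paper above its name can be sketched with NumPy. This is a simplified, training-mode-only illustration: it omits the running statistics used at inference time, and the function name `batch_norm`, the `eps` value, and the sample batch are assumptions.

```python
import numpy as np

def batch_norm(x, gamma, beta, eps=1e-5):
    """Normalize each feature over the mini-batch, then scale and shift."""
    mean = x.mean(axis=0)                     # per-feature batch mean
    var = x.var(axis=0)                       # per-feature batch variance
    x_hat = (x - mean) / np.sqrt(var + eps)   # zero mean, roughly unit variance
    return gamma * x_hat + beta               # learnable scale and shift

# Assumed mini-batch: 3 samples, 2 features on very different scales.
x = np.array([[1.0, 50.0],
              [2.0, 60.0],
              [3.0, 70.0]])
out = batch_norm(x, gamma=np.ones(2), beta=np.zeros(2))
print(out)
```

With `gamma` set to ones and `beta` to zeros, both features come out on the same scale regardless of their original ranges.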

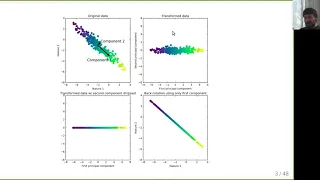

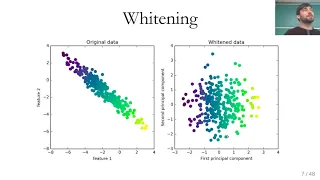

Applied ML 2020 - 13 - Dimensionality reduction

PCA, linear discriminant analysis, manifold learning

From playlist Applied Machine Learning 2020

Hybrid sparse stochastic processes and the resolution of (...) - Unser - Workshop 2 - CEB T1 2019

Michael Unser (EPFL) / 12.03.2019 Hybrid sparse stochastic processes and the resolution of linear inverse problems. Sparse stochastic processes are continuous-domain processes that are specified as solutions of linear stochastic differential equations driven by white Lévy noise. These p

From playlist 2019 - T1 - The Mathematics of Imaging

Mérouane Debbah - Random Matrices for 5G: From Shannon to Wiener

Huawei-IHÉS Workshop on Mathematical Sciences Tuesday, May 5th 2015

From playlist Huawei-IHÉS Workshop on Mathematical Sciences

Summer App Space: Lecture 12 - Dr. J. Graef Rollins - 7/14/2017

The Summer App Space is a summer program for LA students and teachers to learn programming while getting paid to do fun space-related projects. Learn more: http://summerappspace.com

From playlist Summer App Space - 2017

Photoshop Tutorial - Using FREE TRANSFORM to resize

Scale, skew, and rotate layers with Adobe Photoshop's free transform. Watch the full course to build more Photoshop skills: https://www.linkedin.com/learning/photoshop-2020-essential-training-the-basics?trk=sme-youtube_photoshop2020esstbasics-scaling-skewing-rotating-with-free-transform_le

From playlist Adobe Photoshop

Hong Van Le (7/26/22): Supervised learning with probabilistic morphisms and kernel mean embedding

Abstract: In my talk I shall explain a unified model of supervised learning using the concept of probabilistic morphisms. Then I shall define an instantaneous least squares loss function for a unified model of supervised learning via kernel mean embedding, which coincides with the 0-1 loss

From playlist Applied Geometry for Data Sciences 2022

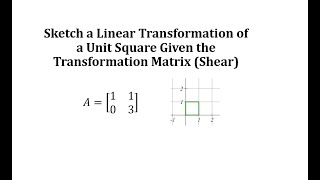

Sketch a Linear Transformation of a Unit Square Given the Transformation Matrix (Shear)

This video explains 2 ways to graph a linear transformation of a unit square on the coordinate plane.

From playlist Matrix (Linear) Transformations

What is a transformation vector

👉 Learn how to apply transformations to a figure on a plane. We will do this by sliding the figure according to the transformation vector or the directions of translation. When performing a translation, we slide a given figure up, down, left, or right. The orientation and size of the fi

From playlist Transformations

Applied Machine Learning 2019 - Lecture 14 - Dimensionality Reduction

Principal Component Analysis, Linear Discriminant Analysis, Manifold Learning, t-SNE. Slides and more materials are on the class website: https://www.cs.columbia.edu/~amueller/comsw4995s19/schedule/

From playlist Applied Machine Learning - Spring 2019