Numerical Optimization Algorithms: Gradient Descent

In this video we discuss a general framework for numerical optimization algorithms. We will see that this involves choosing a direction and step size at each step of the algorithm. In this video, we investigate how to choose a direction using the gradient descent method. Future videos d

From playlist Optimization

Numerical Optimization Algorithms: Step Size Via Line Minimization

In this video we discuss how to choose the step size in a numerical optimization algorithm using the Line Minimization technique. Topics and timestamps: 0:00 – Introduction 2:30 – Single iteration of line minimization 22:58 – Numerical results with line minimization 30:13 – Challenges wit

From playlist Optimization

Numerical Optimization Algorithms: Constant and Diminishing Step Size

In this video we discuss two simple techniques for choosing the step size in a numerical optimization algorithm. Topics and timestamps: 0:00 – Introduction 1:15 – Constant step size 14:37 – Diminishing step size 25:05 – Summary All Optimization videos in a single playlist (https://www.

From playlist Optimization
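
The three Optimization entries above describe one framework: at each iteration, pick a direction (here the negative gradient) and a step size (constant or diminishing). The following is a minimal sketch of that idea, not code taken from the videos. The names `gradient_descent`, `grad_f`, and `step_size`, the quadratic test function, and the specific step-size values are all assumptions chosen for illustration.

```python
import numpy as np

def gradient_descent(grad, x0, step_size, num_iters=100):
    """Iterate x <- x + step_size(k) * direction, with direction = -grad(x).

    `step_size` is a function of the iteration index k, so the same loop
    covers both a constant and a diminishing step-size rule.
    """
    x = np.asarray(x0, dtype=float)
    for k in range(num_iters):
        direction = -grad(x)              # gradient descent direction
        x = x + step_size(k) * direction  # move along it by the chosen step
    return x

# Hypothetical test problem: f(x) = 0.5 * ||x||^2, whose gradient is x
# and whose minimizer is the origin.
grad_f = lambda x: x
x0 = [4.0, -2.0]

# Constant step size (0.1 is an arbitrary illustrative value).
x_const = gradient_descent(grad_f, x0, step_size=lambda k: 0.1)

# Diminishing step size 1 / (k + 2), which shrinks toward zero.
x_dimin = gradient_descent(grad_f, x0, step_size=lambda k: 1.0 / (k + 2))

print("constant step:   ", x_const)
print("diminishing step:", x_dimin)
```

On this particular test function both rules move the iterate toward the origin. How the two compare on other problems depends on the function and on the constants chosen.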

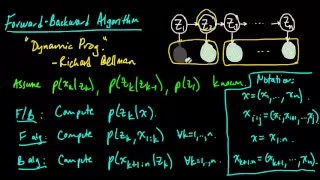

(ML 14.6) Forward-Backward algorithm for HMMs

The Forward-Backward algorithm for a hidden Markov model (HMM). How the Forward algorithm and Backward algorithm work together. Discussion of applications (inference, parameter estimation, sampling from the posterior, etc.).

From playlist Machine Learning

Lookahead Optimizer: k steps forward, 1 step back

Speaker/author: Michael Zhang For details including paper and slides, please visit https://aisc.ai.science/events/2019-09-22-lookahead-optimizer

From playlist Math and Foundations

Mean Solution - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

What Is Mathematical Optimization?

A gentle and visual introduction to the topic of Convex Optimization. (1/3) This video is the first of a series of three. The plan is as follows: Part 1: What is (Mathematical) Optimization? (https://youtu.be/AM6BY4btj-M) Part 2: Convexity and the Principle of (Lagrangian) Duality (

From playlist Convex Optimization

Implementing Shortest Distance - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

From playlist CS294-112 Deep Reinforcement Learning Sp17

Lecture 12 | MIT 6.832 Underactuated Robotics, Spring 2009

Lecture 12: Walking (continued) Instructor: Russell Tedrake See the complete course at: http://ocw.mit.edu/6-832s09 License: Creative Commons BY-NC-SA More information at http://ocw.mit.edu/terms More courses at http://ocw.mit.edu

From playlist MIT 6.832 Underactuated Robotics, Spring 2009

Reduction: Long and Simple Path - Intro to Algorithms

This video is part of an online course, Intro to Algorithms. Check out the course here: https://www.udacity.com/course/cs215.

From playlist Introduction to Algorithms

Model-Based Control of Humanoid Walking

Brian Kim and Sebastian Castro discuss the theoretical foundations of humanoid walking using the linear inverted pendulum model (LIPM) with MATLAB® and Simulink®. First, Brian and Sebastian introduce the basics of generating a stable walking pattern with the linear inverted pendulum model

From playlist Modeling, Simulation and Control: MATLAB and Simulink Robotics Arena

Near log-convexity of measured heat in (discrete) time and consequences - Mert Sağlam

Computer Science/Discrete Mathematics Seminar I Topic: Near log-convexity of measured heat in (discrete) time and consequences Speaker: Mert Sağlam Affiliation: University of Washington Date: March 11, 2019 For more video please visit http://video.ias.edu

From playlist Mathematics

Stanford Seminar - Robotics algorithms that take people into account

February 17, 2023 Anca Dragan of UC Berkeley I discovered AI by reading “Artificial Intelligence: A Modern Approach” (AIMA). What drew me in was the concept that you could specify a goal or objective for a robot, and it would be able to figure out on its own how to sequence actions in or

From playlist Stanford AA289 - Robotics and Autonomous Systems Seminar

Dynamics-Aware Unsupervised Discovery of Skills (Paper Explained)

This RL framework can discover low-level skills all by itself without any reward. Even better, at test time it can compose its learned skills and reach a specified goal without any additional learning! Warning: Math-heavy! OUTLINE: 0:00 - Motivation 2:15 - High-Level Overview 3:20 - Model

From playlist Papers Explained

PyTorch Python Tutorial | Deep Learning Using PyTorch | Image Classifier Using PyTorch | Edureka

( ** Deep Learning Training: https://goo.gl/4it6DE ** ) This Edureka PyTorch Tutorial video (Blog: https://goo.gl/4zxMfU) will help you in understanding various important basics of PyTorch. It also includes a use-case in which we will create an image classifier that will predict the accura

From playlist Deep Learning With TensorFlow Videos

Ultimate Guide To Scaling ML Models - Megatron-LM | ZeRO | DeepSpeed | Mixed Precision

🚀 Sign up for AssemblyAI's speech API using my link 🚀 https://www.assemblyai.com/?utm_source=youtube&utm_medium=social&utm_campaign=theaiepiphany Join our Discord community https://discord.gg/peBrCpheKE In this video I show you what it takes to scale ML models up to tril

From playlist Miscellaneous

Geoffrey Woollard - Stochastic inference and Computational Optimal Transport in 3D heterogeneity

Recorded 14 November 2022. Geoffrey Woollard of the University of British Columbia presents "Using Stochastic variational inference and Computational Optimal Transport for problems in 3D heterogeneity" at IPAM's Cryo-Electron Microscopy and Beyond Workshop. Abstract: We present two computa

From playlist 2022 Cryo-Electron Microscopy and Beyond

Ex: Quadratic Function Application - Horizontal Distance and Vertical Height

This video provides an example of an application of a quadratic function that gives the vertical height of an object as a function of the horizontal distance traveled. Site: http://mathispower4u.com Search: http://mathispower4u.wordpress.com

From playlist Applications of Quadratic Equations

Search 2 - A* | Stanford CS221: Artificial Intelligence (Autumn 2019)

For more information about Stanford's Artificial Intelligence professional and graduate programs visit: https://stanford.io/ai Topics: Problem-solving as finding paths in graphs, A*, consistent heuristics, Relaxation Percy Liang, Associate Professor & Dorsa Sadigh, Assistant Professor - S

From playlist Stanford CS221: Artificial Intelligence: Principles and Techniques | Autumn 2021