Prob & Stats - Markov Chains (8 of 38) What is a Stochastic Matrix?

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain what a stochastic matrix is. Next video in the Markov Chains series: http://youtu.be/YMUwWV1IGdk

From playlist iLecturesOnline: Probability & Stats 3: Markov Chains & Stochastic Processes
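
A stochastic matrix is a square matrix of nonnegative entries in which each row is a probability distribution. The sketch below assumes the row convention (every row sums to 1). Some texts, possibly including this lecture, use columns instead. The function name `is_stochastic` and the example matrices are hypothetical.

```python
def is_stochastic(matrix, tol=1e-12):
    """Return True if `matrix` is row-stochastic:
    square, all entries nonnegative, every row summing to 1."""
    n = len(matrix)
    if n == 0 or any(len(row) != n for row in matrix):
        return False  # must be a non-empty square matrix
    for row in matrix:
        if any(x < 0 for x in row):
            return False  # probabilities cannot be negative
        if abs(sum(row) - 1) > tol:
            return False  # each row must be a probability distribution
    return True


# Hypothetical two-state chain: row i holds the probabilities of moving out of state i.
P = [[0.9, 0.1],
     [0.5, 0.5]]
print(is_stochastic(P))                         # True
print(is_stochastic([[0.7, 0.4], [0.5, 0.5]]))  # False: first row sums to 1.1
```

The tolerance `tol` absorbs floating-point rounding in the row sums; for a column-stochastic convention, apply the same check to the transpose.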

Prob & Stats - Markov Chains (9 of 38) What is a Regular Matrix?

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain what a regular matrix is. Next video in the Markov Chains series: http://youtu.be/loBUEME5chQ

From playlist iLecturesOnline: Probability & Stats 3: Markov Chains & Stochastic Processes
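
A stochastic matrix P is regular when some power P^k has every entry strictly positive. The sketch below, with a hypothetical function name `is_regular`, tracks only the zero/nonzero pattern of successive powers and stops at Wielandt's bound: if any power of an n x n nonnegative matrix is strictly positive, the power (n - 1)^2 + 1 already is.

```python
def _boolean_product(A, B):
    """Zero/nonzero pattern of the product A @ B for 0/1 matrices."""
    n = len(A)
    return [[1 if any(A[i][k] and B[k][j] for k in range(n)) else 0
             for j in range(n)]
            for i in range(n)]


def is_regular(P):
    """Return True if some power of the stochastic matrix P has all entries > 0."""
    n = len(P)
    # Only which entries are positive matters, so work with a 0/1 pattern.
    pattern = [[1 if x > 0 else 0 for x in row] for row in P]
    power = pattern  # pattern of P^1
    # Wielandt's bound: checking powers 1 .. (n - 1)**2 + 1 is enough.
    for _ in range((n - 1) ** 2 + 1):
        if all(x == 1 for row in power for x in row):
            return True
        power = _boolean_product(power, pattern)
    return False


print(is_regular([[0, 1], [1, 0]]))      # False: powers alternate between P and I
print(is_regular([[0, 1], [0.5, 0.5]]))  # True: P^2 has no zero entries
```

Working with 0/1 patterns avoids floating-point underflow and growth when computing large powers.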

Basic stochastic simulation b: Stochastic simulation algorithm

(C) 2012-2013 David Liao (lookatphysics.com) CC-BY-SA. Specify the system. Determine the duration until the next event: exponentially distributed waiting times. Determine what kind of reaction the next event will be. For more information, please search the internet for "stochastic simulation algorithm" or "kin

From playlist Probability, statistics, and stochastic processes
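
The steps listed in the description (specify the system, draw an exponentially distributed waiting time, then pick which reaction fires) match Gillespie's direct method. The sketch below applies them to a hypothetical birth-death system (production at constant rate `k_make`, degradation at rate `k_decay * x`); the model and parameter names are assumptions, not the video's example.

```python
import random


def gillespie_birth_death(k_make, k_decay, x0, t_end, seed=0):
    """Simulate the hypothetical reactions  0 -> X  and  X -> 0  exactly.

    Returns the event times and the molecule count after each event.
    """
    rng = random.Random(seed)
    t, x = 0.0, x0
    times, counts = [t], [x]
    while True:
        # 1. Propensities (rates) of each reaction in the current state.
        a_make = k_make
        a_decay = k_decay * x
        a_total = a_make + a_decay
        if a_total == 0:
            break  # nothing can happen any more
        # 2. Waiting time until the next event ~ Exponential(a_total).
        t += rng.expovariate(a_total)
        if t > t_end:
            break
        # 3. Choose which reaction fires, with probability proportional
        #    to its propensity.
        if rng.random() * a_total < a_make:
            x += 1
        else:
            x -= 1
        times.append(t)
        counts.append(x)
    return times, counts


times, counts = gillespie_birth_death(k_make=2.0, k_decay=0.5, x0=0, t_end=50.0)
print(len(times), "events; final count", counts[-1])
```

Because the decay propensity is zero when `x == 0`, the count can never go negative.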

Recorded: Spring 2014. Lecturer: Dr. Erin M. Buchanan. Materials: created for Memory and Cognition (PSY 422) using Smith and Kosslyn (2006). Lecture materials and assignments available at statisticsofdoom.com: https://statisticsofdoom.com/page/other-courses/

From playlist PSY 422 Memory and Cognition with Dr. B

Lecture 7E : Long term short term memory

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013] Lecture 7E : Long term short term memory

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]

Brain Teasers: 10. Winning in a Markov chain

In this exercise we use the absorbing equations for Markov chains to solve a simple game between two players. The Zoom connection was not very stable, so there are a few audio problems. Sorry.

From playlist Brain Teasers and Quant Interviews
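
The game in the video is not described here, so the sketch below uses a hypothetical stand-in (gambler's ruin): player A holds i tokens, gains one with probability p and loses one with probability q = 1 - p each round, and the game ends at 0 or N tokens. The absorbing equations h[0] = 0, h[N] = 1, h[i] = p h[i+1] + q h[i-1] are solved exactly with fractions.

```python
from fractions import Fraction


def win_probabilities(N, p):
    """h[i] = P(player A reaches N before 0 | A starts with i tokens).

    Solves the absorbing (first-step) equations
        h[0] = 0,  h[N] = 1,  h[i] = p*h[i+1] + q*h[i-1]  for 0 < i < N.
    """
    p = Fraction(p)
    q = 1 - p
    if not 0 < p < 1:
        raise ValueError("p must lie strictly between 0 and 1")
    # Every h[i] is proportional to the unknown h[1]: set it to 1, run the
    # recurrence h[i+1] = (h[i] - q*h[i-1]) / p, then rescale so h[N] = 1.
    g = [Fraction(0), Fraction(1)]
    for i in range(1, N):
        g.append((g[i] - q * g[i - 1]) / p)
    scale = 1 / g[N]
    return [x * scale for x in g]


# Hypothetical biased game: A wins each round with probability 2/3.
print([str(h) for h in win_probabilities(3, Fraction(2, 3))])  # ['0', '4/7', '6/7', '1']
```

For a fair game (p = 1/2) the recurrence gives the familiar linear answer h[i] = i / N.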

Probabilistic methods in statistical physics for extreme statistics... - 18 September 2018

http://crm.sns.it/event/420/ Probabilistic methods in statistical physics for extreme statistics and rare events. Partially supported by UFI (Université Franco-Italienne). In this first introductory workshop, we will present recent advances in analysis, probability of rare events, search p

From playlist Centro di Ricerca Matematica Ennio De Giorgi

Guilherme Ost & Claudia Vargas - Retrieving the structure of probabilistic sequences...

Retrieving the structure of probabilistic sequences of auditory stimuli from electroencephalographic (EEG) signals. Institut Henri Poincaré, 11 rue Pierre et Marie Curie, 75005 PARIS http://www.ihp.fr/ Join the IHP on social media to stay

From playlist Workshop "Workshop on Mathematical Modeling and Statistical Analysis in Neuroscience" - January 31st - February 4th, 2022

Benoîte de Saporta: Stochastic modeling for population dynamics: simulation and inference - Part 1

The aim of this course is to present some examples of stochastic models suitable for population dynamics. The first part will introduce a class of continuous time models called piecewise deterministic Markov processes (PDMPs). Their trajectories are deterministic with jumps at random times

From playlist Probability and Statistics

Statistical Rethinking - Lecture 11

Lecture 11 - Markov chain Monte Carlo - Statistical Rethinking: A Bayesian Course with R Examples

From playlist Statistical Rethinking Winter 2015
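
Markov chain Monte Carlo builds a Markov chain whose long-run visit frequencies match a target distribution. The sketch below is a minimal Metropolis sampler, not the lecture's code: the target (state i of 10 states on a ring has unnormalized weight i + 1) and the name `metropolis_ring` are hypothetical.

```python
import random


def metropolis_ring(n_steps, n_states=10, seed=1):
    """Metropolis sampler on states 0..n_states-1 arranged in a ring.

    Hypothetical target: state i has unnormalized weight i + 1.
    Returns how many times each state was visited.
    """
    rng = random.Random(seed)

    def weight(i):
        return i + 1

    current = n_states - 1
    visits = [0] * n_states
    for _ in range(n_steps):
        visits[current] += 1
        # Symmetric proposal: one step left or right around the ring.
        proposal = (current + rng.choice((-1, 1))) % n_states
        # Accept with probability min(1, weight(proposal) / weight(current)).
        if rng.random() < weight(proposal) / weight(current):
            current = proposal
    return visits


visits = metropolis_ring(200_000)
total = sum(visits)
norm = sum(range(1, 11))  # 55, the normalizing constant for 10 states
for i, v in enumerate(visits):
    print(i, round(v / total, 3), round((i + 1) / norm, 3))  # observed vs. target
```

Only ratios of the target weights enter the acceptance step, which is why the normalizing constant is never needed during sampling.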

Lecture 11/16 : Hopfield nets and Boltzmann machines

Neural Networks for Machine Learning by Geoffrey Hinton [Coursera 2013]: 11A Hopfield Nets; 11B Dealing with spurious minima in Hopfield Nets; 11C Hopfield Nets with hidden units; 11D Using stochastic units to improve search; 11E How a Boltzmann Machine models data.

From playlist Neural Networks for Machine Learning by Professor Geoffrey Hinton [Complete]
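
A Hopfield net stores binary (+1/-1) patterns as minima of an energy function and recalls them by updating one unit at a time. The sketch below, which is not the course's code, uses the standard Hebbian storage rule with a zero diagonal; the function names and the stored pattern are hypothetical.

```python
def train_hebbian(patterns):
    """Hebbian weights for a Hopfield net storing +/-1 patterns (zero diagonal)."""
    n = len(patterns[0])
    W = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    W[i][j] += p[i] * p[j]
    return W


def energy(W, s):
    """Hopfield energy E = -1/2 * sum_ij W[i][j] * s[i] * s[j]."""
    n = len(s)
    return -0.5 * sum(W[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(W, state, max_sweeps=10):
    """Asynchronous updates: each unit takes the sign of its total input.
    With symmetric weights and a zero diagonal, no update raises the energy."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            h = sum(W[i][j] * s[j] for j in range(n))
            new = 1 if h >= 0 else -1
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break  # stable state: a local energy minimum
    return s


stored = [1, -1, 1, -1, 1, -1, 1, -1]
W = train_hebbian([stored])
noisy = list(stored)
noisy[2] = -noisy[2]                         # corrupt one unit
print(recall(W, noisy) == stored)            # True
print(energy(W, noisy), energy(W, stored))   # -14.0 -28.0
```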

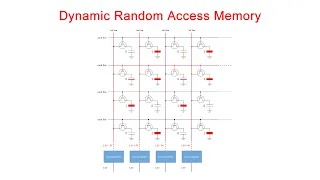

Dynamic Random Access Memory (DRAM). Part 1: Memory Cell Arrays

This is the first in a series of computer science videos about the fundamental principles of Dynamic Random Access Memory (DRAM) and the essential concepts of DRAM operation. This particular video covers the structure and workings of the DRAM memory cell, that is, the basic unit of st

From playlist Random Access Memory

The mathematics of natural algorithms - Bernard Chazelle

Computer Science/Discrete Mathematics Seminar. Topic: The mathematics of natural algorithms. Speaker: Bernard Chazelle. Affiliation: Princeton University. Date: November 14, 2016. For more videos, visit http://video.ias.edu

From playlist Mathematics

Shannon 100 - 26/10/2016 - Elisabeth GASSIAT

Entropy, compression and statistics. Elisabeth Gassiat (Université de Paris-Sud). Claude Shannon is the inventor of information theory. He introduced the notion of entropy as a measure of the information contained in a message viewed as coming from a stochastic source, and demon

From playlist Shannon 100
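
Shannon's entropy of a source with symbol probabilities p_i is H = -Σ p_i log2 p_i bits per symbol, and by his source coding theorem no lossless code can use fewer than H bits per symbol on average. The small sketch below (function names hypothetical) computes H for a distribution and for the symbol frequencies of a message; for example, a coin with P(heads) = 0.9 has entropy of about 0.469 bits, well under the 1 bit of a fair coin.

```python
import math
from collections import Counter


def entropy_bits(probs):
    """Shannon entropy H = -sum p * log2(p) in bits; terms with p = 0 contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def empirical_entropy(message):
    """Entropy of the symbol frequencies observed in `message`."""
    counts = Counter(message)
    n = len(message)
    return entropy_bits(c / n for c in counts.values())


print(entropy_bits([0.5, 0.5]))   # 1.0  (fair coin)
print(entropy_bits([0.25] * 4))   # 2.0  (four equally likely symbols)
print(empirical_entropy("aabb"))  # 1.0
```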

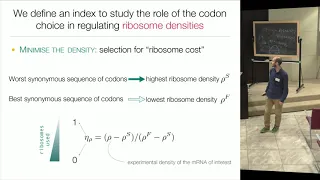

Reverse mathematical methods for reconstructing molecular dynamics... - 18 October 2018

http://crm.sns.it/event/425/ Reverse mathematical methods for reconstructing molecular dynamics in single cell. The latest developments in sequencing and high resolution imaging have led to a recent surge of datasets, requiring new mathematical and statistical methods to analyze the biolog

From playlist Centro di Ricerca Matematica Ennio De Giorgi

(ML 14.3) Markov chains (discrete-time) (part 2)

Definition of a (discrete-time) Markov chain, and two simple examples (random walk on the integers, and an oversimplified weather model). Examples of generalizations to continuous-time and/or continuous-space. Motivation for the hidden Markov model.

From playlist Machine Learning
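
In a discrete-time Markov chain the next state is drawn using only the current state. The sketch below simulates both examples named in the description: a simple random walk on the integers and a two-state weather model. The weather transition probabilities (0.9/0.1 from sunny, 0.5/0.5 from rainy) are hypothetical, as are all function names.

```python
import random

# Hypothetical weather chain: WEATHER[today][tomorrow] = transition probability.
WEATHER = {"sunny": {"sunny": 0.9, "rainy": 0.1},
           "rainy": {"sunny": 0.5, "rainy": 0.5}}


def weather_step(state, rng):
    """Sample tomorrow's weather from today's row of the transition table."""
    row = WEATHER[state]
    return rng.choices(list(row), weights=list(row.values()))[0]


def random_walk_step(x, rng):
    """Simple random walk on the integers: move +1 or -1 with equal probability."""
    return x + rng.choice((-1, 1))


def simulate(step, x0, n_steps, seed=0):
    """Run a chain: each new state depends only on the previous one."""
    rng = random.Random(seed)
    path = [x0]
    for _ in range(n_steps):
        path.append(step(path[-1], rng))
    return path


def propagate(dist, n_steps):
    """Evolve a probability distribution over weather states n_steps forward."""
    for _ in range(n_steps):
        new = {s: 0.0 for s in WEATHER}
        for s, p in dist.items():
            for t, q in WEATHER[s].items():
                new[t] += p * q
        dist = new
    return dist


print(simulate(weather_step, "sunny", 7))
print(simulate(random_walk_step, 0, 10))
print(propagate({"sunny": 1.0, "rainy": 0.0}, 100))  # close to 5/6 sunny, 1/6 rainy
```

The long-run split follows from balancing flows between the two states: 0.1 * P(sunny) = 0.5 * P(rainy), which gives P(sunny) = 5/6.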

Machine Learning from First Principles, with PyTorch AutoDiff — Topic 66 of ML Foundations

In preceding videos in this series, we learned all the most essential differential calculus theory needed for machine learning. In this epic video, it all comes together to enable us to perform machine learning from first principles and fit a line

From playlist Calculus for Machine Learning
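
Fitting a line from first principles means minimizing a loss such as the mean squared error by gradient descent. The video uses PyTorch's autodiff to obtain the gradients; the sketch below swaps in hand-derived gradients so it runs with only the standard library. The data points, learning rate, and step count are hypothetical.

```python
def fit_line(xs, ys, lr=0.02, n_steps=5000):
    """Fit y = m*x + b by gradient descent on the mean squared error.

    Gradients are derived by hand here (no autodiff):
        dL/dm = (2/n) * sum(e_i * x_i),   dL/db = (2/n) * sum(e_i),
    where e_i = m*x_i + b - y_i.
    """
    m, b = 0.0, 0.0
    n = len(xs)
    for _ in range(n_steps):
        errors = [m * x + b - y for x, y in zip(xs, ys)]
        grad_m = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Step against the gradient to reduce the loss.
        m -= lr * grad_m
        b -= lr * grad_b
    return m, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]  # hypothetical data lying exactly on y = 2x + 1
m, b = fit_line(xs, ys)
print(round(m, 4), round(b, 4))  # 2.0 1.0
```

With autodiff, only the loss would be written by hand and the two gradient formulas would be computed automatically.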

Francois Baccelli: High dimensional stochastic geometry in the Shannon regime

This talk will focus on Euclidean stochastic geometry in the Shannon regime. In this regime, the dimension n of the Euclidean space tends to infinity, point processes have intensities which are exponential functions of n, and the random compact sets of interest have diameters of order squa

From playlist Workshop: High dimensional spatial random systems

Regenerative sequences and processes and MCMC by Krishna Athreya

Large deviation theory in statistical physics: Recent advances and future challenges. DATE: 14 August 2017 to 13 October 2017. VENUE: Madhava Lecture Hall, ICTS, Bengaluru. Large deviation theory made its way into statistical physics as a mathematical framework for studying equilibrium syst

From playlist Large deviation theory in statistical physics: Recent advances and future challenges