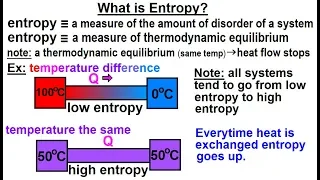

Physics - Thermodynamics 2: Ch 32.7 Thermo Potential (10 of 25) What is Entropy?

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain and give examples of what entropy is. 1) Entropy is a measure of the amount of disorder (randomness) of a system. 2) Entropy is a measure of thermodynamic equilibrium. Low entropy implies heat flow t

From playlist PHYSICS 32.7 THERMODYNAMIC POTENTIALS

Entropy is often taught as a measure of how disordered or how mixed up a system is, but this definition never really sat right with me. How is "disorder" defined and why is one way of arranging things any more disordered than another? It wasn't until much later in my physics career that I

From playlist Thermal Physics/Statistical Physics

Teach Astronomy - Entropy of the Universe

http://www.teachastronomy.com/ The entropy of the universe is a measure of its disorder or chaos. If the laws of thermodynamics apply to the universe as a whole as they do to individual objects or systems within the universe, then the fate of the universe must be to increase in entropy.

From playlist 23. The Big Bang, Inflation, and General Cosmology 2

Topics in Combinatorics lecture 10.0 --- The formula for entropy

In this video I present the formula for the entropy of a random variable that takes values in a finite set, prove that it satisfies the entropy axioms, and prove that it is the only formula that satisfies the entropy axioms. 0:00 The formula for entropy and proof that it satisfies the ax

From playlist Topics in Combinatorics (Cambridge Part III course)
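
As a concrete companion to the lecture description above, here is a minimal sketch of the entropy of a random variable taking values in a finite set. It assumes the standard Shannon form H(X) = Σ p(x) log2(1/p(x)), measured in bits; the description is truncated, so the exact normalization and log base used in the video are an assumption, and the function name `shannon_entropy` is hypothetical.

```python
import math


def shannon_entropy(probs):
    """Return the entropy, in bits, of a finite probability distribution.

    `probs` is a sequence of probabilities that should sum to 1.
    Outcomes with probability 0 contribute nothing, following the usual
    convention 0 * log(1/0) = 0.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Sum p * log2(1/p) over outcomes that can actually occur.
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)


if __name__ == "__main__":
    print(shannon_entropy([0.5, 0.5]))   # fair coin
    print(shannon_entropy([0.25] * 4))   # uniform over four outcomes
    print(shannon_entropy([1.0]))        # deterministic outcome
```

A uniform distribution over n outcomes gives log2(n) bits under this definition, and a deterministic variable gives 0, which are two of the properties an axiomatic treatment typically asks for.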

(IC 3.4) Remark - an alternate proof

The non-negativity of relative entropy can also be used to show that the expected codeword length of a symbol code is bounded below by the entropy of the source. A playlist of these videos is available at: http://www.youtube.com/playlist?list=PLE125425EC837021F

From playlist Information theory and Coding
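
The bound mentioned in the remark above can be sketched in a few lines. This is one standard route and not necessarily the argument given in the video; it assumes a uniquely decodable symbol code with codeword lengths $\ell(x)$, so that the Kraft inequality $z = \sum_x 2^{-\ell(x)} \le 1$ holds. The symbols $p$, $q$, $z$, $L$, and $H$ are notation introduced here for illustration.

$$
\begin{aligned}
q(x) &= \frac{2^{-\ell(x)}}{z}, \qquad z = \sum_x 2^{-\ell(x)} \le 1, \\
L - H(X) &= \sum_x p(x)\,\ell(x) + \sum_x p(x) \log_2 p(x) \\
&= \sum_x p(x) \log_2 \frac{p(x)}{2^{-\ell(x)}} \\
&= \sum_x p(x) \log_2 \frac{p(x)}{q(x)} + \log_2 \frac{1}{z} \\
&= D(p \,\|\, q) + \log_2 \frac{1}{z} \;\ge\; 0 .
\end{aligned}
$$

Both terms in the last line are non-negative: the first by the non-negativity of relative entropy, the second because $z \le 1$. Hence the expected codeword length satisfies $L \ge H(X)$.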

Physics - Thermodynamics: (1 of 5) Entropy - Basic Definition

Visit http://ilectureonline.com for more math and science lectures! In this video I will explain and help you understand entropy.

From playlist PHYSICS - THERMODYNAMICS

Entropy Explained In 60 Seconds!! #Thermodynamics #Chemistry #Physics #Math #NicholasGKK #Shorts

From playlist Heat and Chemistry

Entropy's role on Thermodynamics

Thermodynamics depends on enthalpy, but it also depends on entropy. Entropy is a quantitative measure of the disorder of a system. We can see how reactions tend to go from order to disorder. At best they can switch between the two reversibly (second law of thermodynamics). There exist reac

From playlist Materials Sciences 101 - Introduction to Materials Science & Engineering 2020

Entropy production during free expansion of an ideal gas by Subhadip Chakraborti

Abstract: According to the second law, the entropy of an isolated system increases during its evolution from one equilibrium state to another. The free expansion of a gas, on removal of a partition in a box, is an example where we expect to see such an increase of entropy. The constructi

From playlist Seminar Series

Computational Entropy - Salil Vadhan

Salil Vadhan Harvard University; Visiting Researcher Microsoft Research SVC; Visiting Scholar Stanford University April 23, 2012 Shannon's notion of entropy measures the amount of "randomness" in a process. However, to an algorithm with bounded resources, the amount of randomness can appea

From playlist Mathematics

Nexus Trimester - Mokshay Madiman (University of Delaware)

The Stam region, or the differential entropy region for sums of independent random vectors Mokshay Madiman (University of Delaware) February 25, 2016 Abstract: Define the Stam region as the subset of the positive orthant in [Math Processing Error] that arises from considering entropy powe

From playlist Nexus Trimester - 2016 - Fundamental Inequalities and Lower Bounds Theme

Entropy of Hawking Radiation Colloquium

From playlist Natural Sciences

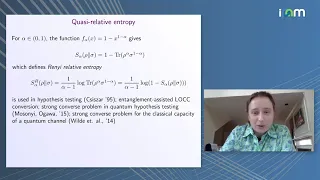

Anna Vershynina: "Quasi-relative entropy: the closest separable state & reversed Pinsker inequality"

Entropy Inequalities, Quantum Information and Quantum Physics 2021 "Quasi-relative entropy: the closest separable state and the reversed Pinsker inequality" Anna Vershynina - University of Houston Abstract: It is well known that for pure states the relative entropy of entanglement is equ

From playlist Entropy Inequalities, Quantum Information and Quantum Physics 2021

Entropy Equipartition along almost Geodesics in Negatively Curved Groups by Amos Nevo

PROGRAM : ERGODIC THEORY AND DYNAMICAL SYSTEMS (HYBRID) ORGANIZERS : C. S. Aravinda (TIFR-CAM, Bengaluru), Anish Ghosh (TIFR, Mumbai) and Riddhi Shah (JNU, New Delhi) DATE : 05 December 2022 to 16 December 2022 VENUE : Ramanujan Lecture Hall and Online The programme will have an emphasis

From playlist Ergodic Theory and Dynamical Systems 2022

Rocío González Díaz (3/16/22): Persistent entropy, a tool for topologically summarizing data.

Title: Introducing persistent entropy, a tool for topologically summarizing data. Properties and applications. Abstract: In this talk, I will introduce an entropy-based summarization of persistence barcodes. We will study some of its properties, including its stability to small perturb

From playlist AATRN 2022

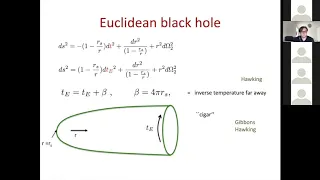

A modern take on the information paradox.... (Lecture - 03) by Ahmed Almheiri

INFOSYS-ICTS STRING THEORY LECTURES A MODERN TAKE ON THE INFORMATION PARADOX AND PROGRESS TOWARDS ITS RESOLUTION SPEAKER: Ahmed Almheiri (Institute for Advanced Study, Princeton) DATE: 30 September 2019 to 03 October 2019 VENUE: Emmy Noether Seminar Room, ICTS Bangalore Lecture 1: Mond

From playlist Infosys-ICTS String Theory Lectures

Entropy accumulation - O. Fawzi - Workshop 2 - CEB T3 2017

Omar Fawzi / 23.10.17 Entropy accumulation We ask the question whether entropy accumulates, in the sense that the operationally relevant total uncertainty about an n-partite system $A = (A_1, \ldots, A_n)$ corresponds to the sum of the entropies of its parts $A_i$. The Asymptotic Equipartition Property i

From playlist 2017 - T3 - Analysis in Quantum Information Theory - CEB Trimester

Equidistribution of Measures with High Entropy for General Surface Diffeomorphisms by Omri Sarig

PROGRAM : ERGODIC THEORY AND DYNAMICAL SYSTEMS (HYBRID) ORGANIZERS : C. S. Aravinda (TIFR-CAM, Bengaluru), Anish Ghosh (TIFR, Mumbai) and Riddhi Shah (JNU, New Delhi) DATE : 05 December 2022 to 16 December 2022 VENUE : Ramanujan Lecture Hall and Online The programme will have an emphasis

From playlist Ergodic Theory and Dynamical Systems 2022

Topological entropy of Hamiltonian diffeomorphisms: a persistence homology and... - Erman Cineli

Joint IAS/Princeton/Montreal/Paris/Tel-Aviv Symplectic Geometry Zoominar Topic: Topological entropy of Hamiltonian diffeomorphisms: a persistence homology and Floer theory perspective Speaker: Erman Cineli Date: February 25, 2022 In this talk I will introduce barcode entropy and discuss

From playlist Mathematics

Maxwell-Boltzmann distribution

Entropy and the Maxwell-Boltzmann velocity distribution. Also discusses why this is different than the Bose-Einstein and Fermi-Dirac energy distributions for quantum particles. My Patreon page is at https://www.patreon.com/EugeneK 00:00 Maxwell-Boltzmann distribution 02:45 Higher Temper

From playlist Physics