Articles containing proofs | Convex analysis | Statistical inequalities | Theorems in analysis | Inequalities | Theorems involving convexity | Probabilistic inequalities

Jensen's inequality

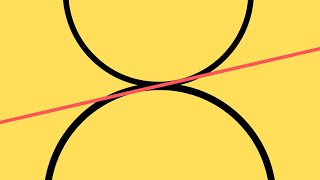

In mathematics, Jensen's inequality, named after the Danish mathematician Johan Jensen, relates the value of a convex function of an integral to the integral of the convex function. It was proved by Jensen in 1906, building on an earlier proof of the same inequality for doubly-differentiable functions by Otto Hölder in 1889. Given its generality, the inequality appears in many forms depending on the context, some of which are presented below. In its simplest form the inequality states that the convex transformation of a mean is less than or equal to the mean applied after convex transformation; it is a simple corollary that the opposite is true of concave transformations. Jensen's inequality generalizes the statement that the secant line of a convex function lies above the graph of the function, which is Jensen's inequality for two points: the secant line consists of weighted means of the convex function (for t ∈ [0,1]), while the graph of the function is the convex function of the weighted means, Thus, Jensen's inequality is In the context of probability theory, it is generally stated in the following form: if X is a random variable and φ is a convex function, then The difference between the two sides of the inequality, , is called the . (Wikipedia).