(ML 14.4) Hidden Markov models (HMMs) (part 1)

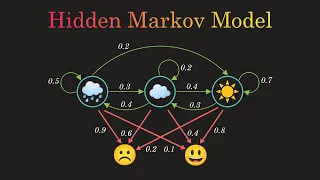

Definition of a hidden Markov model (HMM). Description of the parameters of an HMM (transition matrix, emission probability distributions, and initial distribution). Illustration of a simple example of an HMM.

From playlist Machine Learning

(ML 14.5) Hidden Markov models (HMMs) (part 2)

Definition of a hidden Markov model (HMM). Description of the parameters of an HMM (transition matrix, emission probability distributions, and initial distribution). Illustration of a simple example of an HMM.

From playlist Machine Learning

Hidden Markov Model Clearly Explained! Part - 5

So far we have discussed Markov Chains. Let's move one step further. Here, I'll explain the Hidden Markov Model with an easy example. I'll also show you the underlying mathematics.

From playlist Markov Chains Clearly Explained!

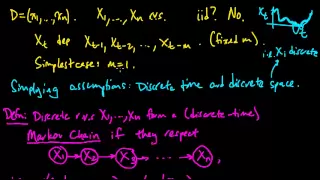

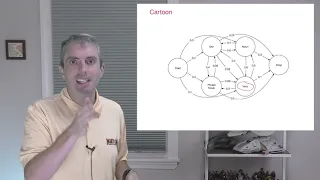

(ML 14.2) Markov chains (discrete-time) (part 1)

Definition of a (discrete-time) Markov chain, and two simple examples (random walk on the integers, and an oversimplified weather model). Examples of generalizations to continuous-time and/or continuous-space. Motivation for the hidden Markov model.

From playlist Machine Learning

How to Estimate the Parameters of a Hidden Markov Model from Data [Lecture]

This is a single lecture from a course. If you like the material and want more context (e.g., the lectures that came before), check out the whole course: https://boydgraber.org/teaching/CMSC_723/ (Including homeworks and reading.) Intro to HMMs: https://youtu.be/0gu1BDj5_Kg Music: h

From playlist Computational Linguistics I

How Hidden Markov Models (HMMs) can Label as Sentence's Parts of Speech [Lecture]

This is a single lecture from a course. If you like the material and want more context (e.g., the lectures that came before), check out the whole course: https://boydgraber.org/teaching/CMSC_723/ (Including homeworks and reading.) Music: https://soundcloud.com/alvin-grissom-ii/review

From playlist Computational Linguistics I

Hidden Markov Model : Data Science Concepts

All about the Hidden Markov Model in data science / machine learning

From playlist Data Science Concepts

Data Science - Part XIII - Hidden Markov Models

For downloadable versions of these lectures, please go to the following links: http://www.slideshare.net/DerekKane/presentations https://github.com/DerekKane/YouTube-Tutorials This lecture provides an overview of Markov processes and Hidden Markov Models. We will start off by going throug

From playlist Data Science

(ML 14.3) Markov chains (discrete-time) (part 2)

Definition of a (discrete-time) Markov chain, and two simple examples (random walk on the integers, and an oversimplified weather model). Examples of generalizations to continuous-time and/or continuous-space. Motivation for the hidden Markov model.

From playlist Machine Learning

Heather Shappell - State change estimation in dynamic functional connectivity w/ semi-Markov models

Recorded 29 August 2022. Heather Shappell of Wake Forest University presents "Improved state change estimation in dynamic functional connectivity using hidden semi-Markov models" at IPAM's Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond. Abstract: The stu

From playlist 2022 Reconstructing Network Dynamics from Data: Applications to Neuroscience and Beyond

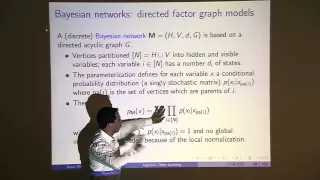

Jason Morton: "An Algebraic Perspective on Deep Learning, Pt. 2"

Graduate Summer School 2012: Deep Learning, Feature Learning "An Algebraic Perspective on Deep Learning, Pt. 2" Jason Morton, Pennsylvania State University Institute for Pure and Applied Mathematics, UCLA July 20, 2012 For more information: https://www.ipam.ucla.edu/programs/summer-scho

From playlist GSS2012: Deep Learning, Feature Learning

Turing Lecture: Data Science for Medicine

Medicine 2.0: Using Machine Learning to Transform Medical Practice and Discovery In this talk, Mihaela will present her view of the transformation of medicine through the use of machine learning, and some of her own contributions. This transformation is already being felt in every aspect

From playlist Turing Lectures

Everything you need to know about Machine Learning!

Here is an introduction to Machine Learning. Instead of developing algorithms for every task and subtask to solve a problem, Machine Learning involves teaching a computer to teach itself. There are different types of machine learning problems we may come across. TYPES OF MACHINE LEARNING

From playlist Algorithms and Concepts

Emmanuel Candès: “The Knockoffs Framework: New Statistical Tools for Replicable Selections”

Green Family Lecture Series 2018 “The Knockoffs Framework: New Statistical Tools for Replicable Selections” Emmanuel Candès, Stanford University Abstract: A common problem in modern statistical applications is to select, from a large set of candidates, a subset of variables which are imp

From playlist Public Lectures

Fellow Short Talks: Professor Ruth King, University of Edinburgh

Bio: Ruth King is the Thomas Bayes' Chair of Statistics at the University of Edinburgh. She was awarded her PhD in 2001 from the University of Bristol. She then held positions at the Universities of Cambridge (PDRA; 2001-3) and St Andrews (lecturer 2003-10; reader 2010-15) before taking up

From playlist Short Talks

CMU Neural Nets for NLP 2017 (18): Unsupervised Learning of Structure

This lecture (by Graham Neubig) for CMU CS 11-747, Neural Networks for NLP (Fall 2017) covers: * Learning Features vs. Learning Structure * Unsupervised Learning Methods * Design Decisions for Unsupervised Models * Examples of Unsupervised Learning Slides: http://phontron.com/class/nn4nl

From playlist CMU Neural Nets for NLP 2017

Joshua Bon - Twisted: Improving particle filters by learning modified paths

Dr Joshua Bon (QUT) presents "Twisted: Improving particle filters by learning modified paths", 22 April 2022.

From playlist Statistics Across Campuses

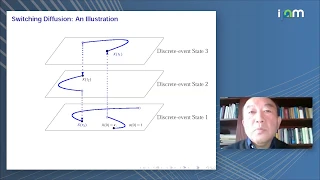

Gang George Yin: "High-Dimensional HJBs: Mean-Field Limits and McKean-Vlasov Equations"

High Dimensional Hamilton-Jacobi PDEs 2020 Workshop I: High Dimensional Hamilton-Jacobi Methods in Control and Differential Games "High-Dimensional HJBs: Mean-Field Limits and McKean-Vlasov Equations" Gang George Yin, Wayne State University Abstract: In this talk, we will study mean-fiel

From playlist High Dimensional Hamilton-Jacobi PDEs 2020

(ML 14.1) Markov models - motivating examples

Introduction to Markov models, using intuitive examples of applications, and motivating the concept of the Markov chain.

From playlist Machine Learning