This is the third video of a series from the Worldwide Center of Mathematics explaining the basics of vectors. This video explains the precise definition of dot product (also known as scalar product) and shows some examples of calculated dot products. For more math videos, visit our channe

From playlist Basics: Vectors

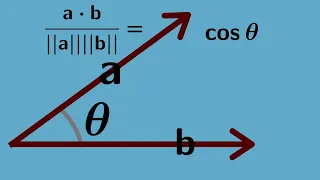

Proof of the Dot Product Theorem

Dot products are essential in a mathematician's toolbox. There is a property of dot products, however, that is often taken for granted: the product of the magnitudes of two vectors and the cosine of the angle between them equals the sum of the products of their respective compo

From playlist Fun
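
The identity named in the entry above can be sketched with the law of cosines. This is one common route and not necessarily the argument used in the video; the symbols $\mathbf{a}$, $\mathbf{b}$, $a_i$, $b_i$, and $\theta$ (the angle between the two vectors) are notation assumed here. Apply the law of cosines to the triangle with sides $\mathbf{a}$, $\mathbf{b}$, and $\mathbf{a}-\mathbf{b}$:

$$
\|\mathbf{a}-\mathbf{b}\|^2 = \|\mathbf{a}\|^2 + \|\mathbf{b}\|^2 - 2\,\|\mathbf{a}\|\,\|\mathbf{b}\|\cos\theta
$$

Expanding the same squared length component by component gives:

$$
\|\mathbf{a}-\mathbf{b}\|^2 = \sum_i (a_i - b_i)^2 = \|\mathbf{a}\|^2 + \|\mathbf{b}\|^2 - 2\sum_i a_i b_i
$$

Equating the two right-hand sides and cancelling the shared terms leaves:

$$
\sum_i a_i b_i = \|\mathbf{a}\|\,\|\mathbf{b}\|\cos\theta
$$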

Multivariable Calculus | The dot product.

We present the definition of the dot product as well as a geometric interpretation and some examples. http://www.michael-penn.net http://www.randolphcollege.edu/mathematics/

From playlist Vectors for Multivariable Calculus

Introduction to the Dot Product

Introduction to the Dot Product. If you enjoyed this video, please consider liking, sharing, and subscribing. You can also help support my channel by becoming a member: https://www.youtube.com/channel/UCr7lmzIk63PZnBw3bezl-Mg/join Thank you :)

From playlist Calculus 3

Cross Product and Dot Product: Visual explanation

Visual interpretation of the cross product and the dot product of two vectors. My Patreon page: https://www.patreon.com/EugeneK

From playlist Physics

This shows an interactive illustration that lets you play around with the dot product. The clip is from the book "Immersive Linear Algebra" at http://www.immersivemath.com.

From playlist Chapter 3 - The Dot Product

What is the dot product of two vectors? How is it useful? Free ebook https://bookboon.com/en/introduction-to-vectors-ebook (updated link) Test your understanding via a short quiz http://goo.gl/forms/2SGI5Kvpk9

From playlist Introduction to Vectors

What are the properties of the dot product

http://www.freemathvideos.com In this video series I will show you how to apply the dot product of two vectors and use the product to determine whether two vectors are orthogonal. Unlike scalar multiplication, the dot product does not produce another vector but rather produces a scalar tha

From playlist Vectors
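
The orthogonality test mentioned in the entry above can be sketched in a few lines of Python. This is a minimal illustration, not code from the video: the helper names `dot` and `is_orthogonal` and the tolerance `tol` are assumptions introduced here. Two vectors are treated as orthogonal when their dot product is zero, within a small tolerance to absorb floating-point error.

```python
import math


def dot(a, b):
    """Return the sum of the products of corresponding components."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of components")
    return sum(x * y for x, y in zip(a, b))


def is_orthogonal(a, b, tol=1e-9):
    """Hypothetical helper: True when the dot product is zero within tol."""
    return math.isclose(dot(a, b), 0.0, abs_tol=tol)


# 1*(-2) + 2*1 = 0, so these two vectors are perpendicular.
perpendicular = is_orthogonal((1, 2), (-2, 1))

# 1*3 + 2*4 = 11, so these two vectors are not perpendicular.
not_perpendicular = is_orthogonal((1, 2), (3, 4))
```

Note that the result of `dot` is a single number, not a vector, which is the distinction from scalar multiplication that the description draws.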

What is the formula for the dot product

http://www.freemathvideos.com In this video series I will show you how to apply the dot product of two vectors and use the product to determine whether two vectors are orthogonal. Unlike scalar multiplication, the dot product does not produce another vector but rather produces a scalar tha

From playlist Vectors

Unveiling the Power of Graphs: Node and Edge Classification w/ GraphSAGE | GraphML

Full code example of Node and Edge classification with GraphSAGE for GraphML. DGL on PyTorch backbone. Graph Neural Networks explained. One of the most popular and widely adopted tasks for graph neural networks is node classification, where each node in the training/validation/test set is

From playlist Node & Edge Classification, Link Prediction w/ GraphML

CODE: GRAPH Link Prediction w/ DGL on Pytorch and PyG Code Example | GraphML | GNN

For Graph ML we make a deep dive to code LINK Prediction on Graph Data sets with DGL and PyG. We examine the main ideas behind LINK Prediction and how to code a link prediction example in PyG and DGL - Deep Graph Library. DGL - Easy Deep Learning on Graphs with framework agnostic coding (e

From playlist Node & Edge Classification, Link Prediction w/ GraphML

Node2vec : TensorFlow + KERAS code in live COLAB | Graph NN 2022

Real-time COLAB to learn Node2vec for Graph representation learning in KERAS implementation for learning low-dimensional embeddings of nodes in a graph, w/ neighborhood-preserving objective. Download your COLAB: https://colab.research.google.com/github/keras-team/keras-io/blob/master/exa

From playlist Word2Vec and Node2vec (pure TensorFlow 2.7 + KERAS)

CS224W: Machine Learning with Graphs | 2021 | Lecture 3.1 - Node Embeddings

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/3Cv1BEU Jure Leskovec Computer Science, PhD From previous lectures we see how we can use machine learning with feature engineering to make predictions on nodes, li

From playlist Stanford CS224W: Machine Learning with Graphs

NJIT Data Science Seminar: Steven Skiena, Stony Brook University

NJIT Institute for Data Science https://datascience.njit.edu/ Word and Graph Embeddings for Machine Learning. Steven Skiena, Ph.D., Distinguished Professor, Department of Computer Science, Stony Brook University. Distributed word embeddings (word2vec) provide a powerful way to reduce large

From playlist Talks

Graphormer - Do Transformers Really Perform Bad for Graph Representation? | Paper Explained

❤️ Become The AI Epiphany Patreon ❤️ ► https://www.patreon.com/theaiepiphany Paper: Do Transformers Really Perform Bad for Graph Representation? In this video, I cover Graphormer, a new transformer model that achieved SOTA results on the OGB large-scale challenge

From playlist Transformers

Tathagata Basak: A monstrous(?) complex hyperbolic orbifold

I will report on progress with Daniel Allcock on the "Monstrous Proposal", namely the conjecture: Complex hyperbolic 13-space, modulo a particular discrete group, and with orbifold structure changed in a simple way, has fundamental group equal to (MxM)(semidirect)2, where M is the Monster

From playlist Topology

Lecture 4 – Word Vectors 3 | Stanford CS224U: Natural Language Understanding | Spring 2019

For more information about Stanford’s Artificial Intelligence professional and graduate programs, visit: https://stanford.io/ai Professor Christopher Potts Professor of Linguistics and, by courtesy, Computer Science Director, Stanford Center for the Study of Language and Information http:

From playlist Stanford CS224U: Natural Language Understanding | Spring 2019

Determine the dot product between two vectors

Learn how to determine the dot product of vectors. The dot product of two vectors, also called the scalar product of the vectors, is the sum of the products of the corresponding components of the vectors in each direction. When the magnitudes of the vectors are known and the angle between the vectors is kn

From playlist Vectors
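
The two ways of computing a dot product touched on in the entry above can be sketched as follows. The description is cut off, so the second function assumes it goes on to the standard magnitude-and-angle form; the function names `dot_components` and `dot_from_angle` are hypothetical and chosen here for illustration only.

```python
import math


def dot_components(a, b):
    """Component form: multiply matching components, then add the results."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of components")
    return sum(x * y for x, y in zip(a, b))


def dot_from_angle(mag_a, mag_b, theta):
    """Magnitude form (assumed): |a| * |b| * cos(theta), theta in radians."""
    return mag_a * mag_b * math.cos(theta)


# Worked example in three dimensions: 1*4 + 2*(-5) + 3*6 = 4 - 10 + 18 = 12
component_result = dot_components((1, 2, 3), (4, -5, 6))

# Magnitudes 3 and 2 with a 60 degree angle: 3 * 2 * 0.5, approximately 3.0
angle_result = dot_from_angle(3.0, 2.0, math.radians(60))
```

The component form needs only the coordinates, while the magnitude form is convenient when lengths and the angle are given directly; both return a scalar.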

Learn low-dim Embeddings that encode GRAPH structure (data) : "Representation Learning" /arXiv

Optimize your complex Graph Data before applying Neural Network predictions. Automatically learn to encode graph structure into low-dimensional embeddings, using techniques based on deep learning and nonlinear dimensionality reduction. An encoder-decoder perspective, random walk approach

From playlist Learn Graph Neural Networks: code, examples and theory